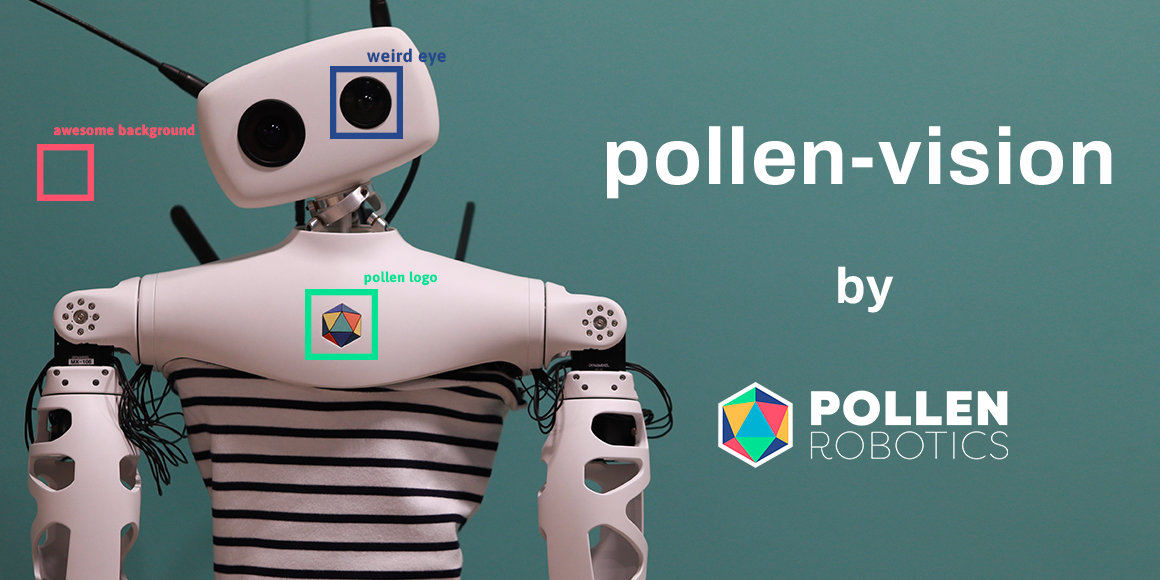

Pollen-Vision: Unified interface for Zero-Shot vision models in robotics

What Happened

Pollen-Vision: Unified interface for Zero-Shot vision models in robotics

Our Take

honestly? this whole 'unified interface' pitch for zero-shot vision models in robotics sounds like a massive PR move. we're already drowning in abstractions, and right now this is just another layer on top of existing frameworks. sure, it might simplify the API calls, but unless it actually solves domain adaptation and sensor fusion in messy, real-world physical environments, it's academic fluff. i'd bet money they'll slap a $10k price tag on it before anyone truly uses it outside of sandbox testing.

look, the real bottleneck isn't the interface; it's getting reliable, low-latency data from real-world robotics hardware. until they show us how this cuts training and deployment time on actual hardware, i'm skeptical.

What To Do

evaluate whether the interface delivers measurable gains, in latency and in deployment time, over existing modular setups on real robotics hardware.
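a minimal sketch of the latency half of that evaluation, assuming you can wrap both the unified-interface pipeline and your existing modular pipeline as plain Python callables that take one frame. `benchmark_latency`, `unified_detect` and `modular_detect` are hypothetical names for illustration, not part of Pollen-Vision's API; swap in your real model calls and real camera frames.

```python
import statistics
import time
from typing import Callable, Sequence


def benchmark_latency(
    detect: Callable[[object], object],
    frames: Sequence[object],
    warmup: int = 3,
) -> dict:
    """Time a (hypothetical) detector callable per frame; return stats in ms."""
    if not frames:
        raise ValueError("need at least one frame to benchmark")
    # warm-up calls absorb one-off costs (model loading, caches, lazy init)
    for frame in frames[:warmup]:
        detect(frame)
    samples_ms = []
    for frame in frames:
        start = time.perf_counter()
        detect(frame)
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    samples_ms.sort()
    # nearest-rank style p95, clamped to the last sample
    p95_index = min(len(samples_ms) - 1, int(0.95 * len(samples_ms)))
    return {
        "median_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "n": len(samples_ms),
    }


if __name__ == "__main__":
    # hypothetical stand-ins; replace with the two pipelines you are comparing
    def unified_detect(frame):
        return []

    def modular_detect(frame):
        return []

    frames = [None] * 50  # replace with frames captured from your robot
    for name, fn in [("unified", unified_detect), ("modular", modular_detect)]:
        print(name, benchmark_latency(fn, frames))
```

median plus p95 is used because tail latency, not the average, is usually what breaks a control loop; run it on the target hardware, not a dev laptop, or the numbers say little about deployment.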
