Why Go Dominates High-Throughput Backend Services

AI agent runtimes need high-fan-out, low-overhead concurrency: tool calls, streaming, retries, telemetry. Go is the default for the agent control plane — here is why, with numbers.

Go powers Kubernetes, Docker, Terraform, and most of the cloud-native infrastructure stack. It is the primary language for backend services at companies processing millions of requests per second. This adoption is not accidental — it reflects a specific set of engineering trade-offs that favor production systems over developer convenience.

The core insight behind Go's design is that software is read far more often than it is written, and maintained far longer than it is developed. Go sacrifices expressive syntax (no generics until Go 1.18, no inheritance, limited metaprogramming) in exchange for readability and simplicity. A Go codebase written by a team of 50 engineers looks largely the same as one written by 5, because the language offers few ways to diverge in style.

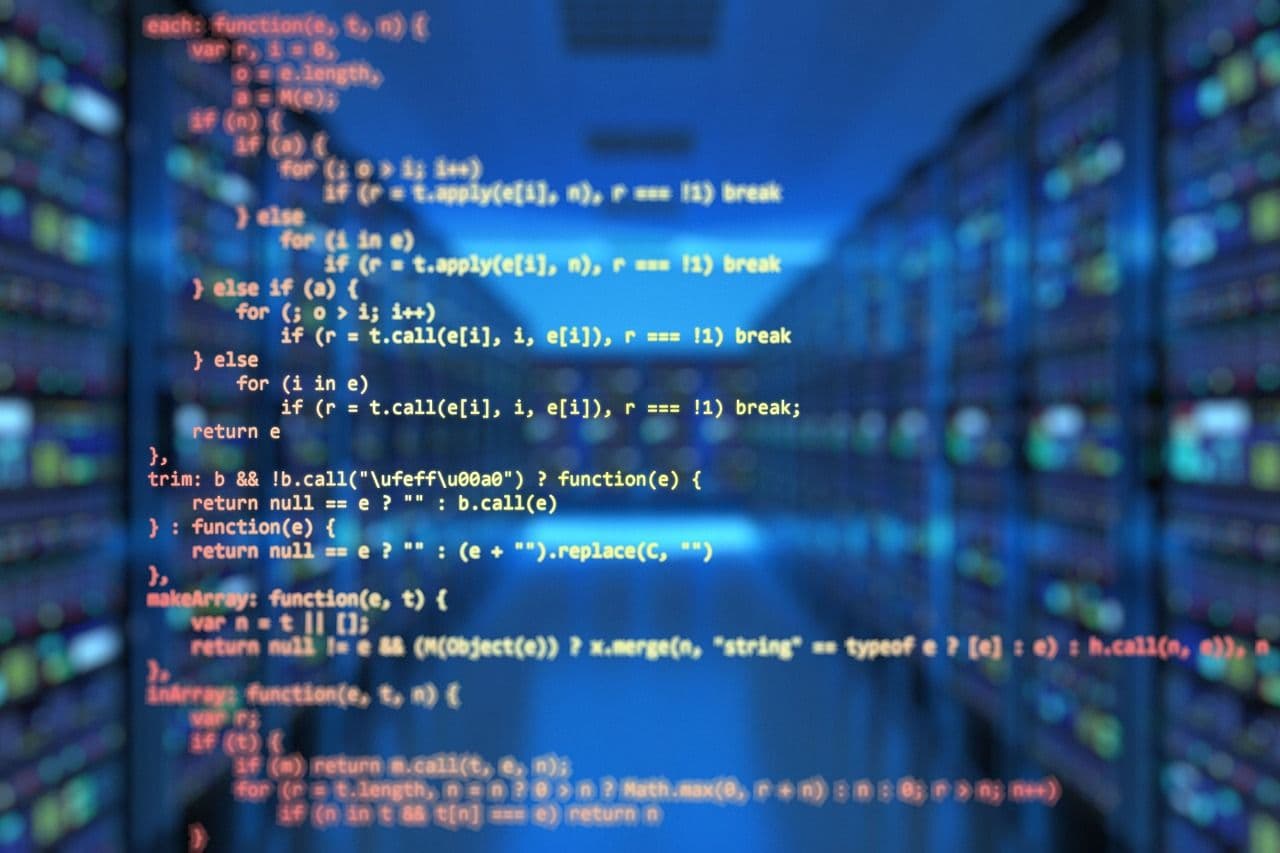

Performance Characteristics

| Dimension | Go | Node.js | Python | Java | Rust |
|---|---|---|---|---|---|
| Compilation | Fast (seconds) | Interpreted/JIT (no build step) | Interpreted (no build step) | Slow (minutes) | Slow (minutes) |
| Memory usage | Low-medium | Medium-high | High | High | Very low |
| Concurrency model | Goroutines (M:N) | Event loop (single-threaded) | Threads/asyncio (GIL-limited) | OS threads (virtual threads since Java 21) | async/threads |
| Latency (p99) | Excellent | Good | Poor | Good (after warmup) | Excellent |
| Throughput | Excellent | Good | Poor | Excellent | Excellent |
| Deployment artifact | Single binary | Runtime required | Runtime required | JVM required | Single binary |

Goroutines: The Concurrency Advantage

Go's goroutines are lightweight user-space threads managed by the Go runtime scheduler, which multiplexes them onto a small pool of OS threads. You can spawn millions of goroutines on a single machine, because each starts with a stack of about 2 KB that grows as needed. By contrast, OS threads (the traditional model in Java and many other languages) typically reserve around 1 MB of stack each, depending on platform and runtime settings, and become impractical beyond a few thousand to tens of thousands per process. This means Go services can handle massive concurrent connection counts without the complexity of async/await patterns or the limitations of single-threaded event loops.
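
To make the cost of a goroutine concrete, here is a minimal sketch that starts a large number of them and waits for all to finish. The function name spawnAndCount and the figure of 100,000 are illustrative assumptions, not a benchmark.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// spawnAndCount starts n goroutines, each of which increments a shared
// counter exactly once, and returns the final count after all have finished.
func spawnAndCount(n int) int64 {
	var (
		wg    sync.WaitGroup
		count int64
	)
	wg.Add(n)
	for i := 0; i < n; i++ {
		go func() {
			defer wg.Done()
			atomic.AddInt64(&count, 1) // atomic: many goroutines write concurrently
		}()
	}
	wg.Wait()
	return atomic.LoadInt64(&count)
}

func main() {
	// 100,000 is an arbitrary illustrative figure.
	fmt.Println(spawnAndCount(100_000)) // 100000
}
```

On typical hardware this finishes quickly, which is hard to reproduce with one OS thread per task.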

For AI-serving infrastructure — API gateways that fan out to multiple model endpoints, orchestration services that coordinate multi-agent workflows, or streaming services that maintain thousands of concurrent SSE connections — Go's concurrency model provides the right abstraction with minimal overhead.
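
The fan-out pattern described above might look like the following sketch. The callModel function is a hypothetical stand-in that simulates a network call with a sleep, and the endpoint names and latencies are invented for illustration. The point is the shape: one goroutine per endpoint, a shared deadline through context, and results collected by index.

```go
package main

import (
	"context"
	"fmt"
	"sync"
	"time"
)

// endpoint describes a simulated model endpoint (hypothetical).
type endpoint struct {
	name    string
	latency time.Duration
}

// result pairs an endpoint name with its reply or error.
type result struct {
	endpoint string
	reply    string
	err      error
}

// callModel stands in for a real network call. It waits for the simulated
// latency, or returns early with the context error if the deadline passes.
func callModel(ctx context.Context, ep endpoint) (string, error) {
	select {
	case <-time.After(ep.latency):
		return "reply from " + ep.name, nil
	case <-ctx.Done():
		return "", ctx.Err()
	}
}

// fanOut calls every endpoint concurrently and returns results in input order.
// Each goroutine writes to its own slice index, so no mutex is needed.
func fanOut(ctx context.Context, endpoints []endpoint) []result {
	results := make([]result, len(endpoints))
	var wg sync.WaitGroup
	for i, ep := range endpoints {
		wg.Add(1)
		go func(i int, ep endpoint) {
			defer wg.Done()
			reply, err := callModel(ctx, ep)
			results[i] = result{endpoint: ep.name, reply: reply, err: err}
		}(i, ep)
	}
	wg.Wait()
	return results
}

// countOutcomes runs the fan-out with a shared deadline and tallies outcomes.
func countOutcomes(timeoutMillis int) (succeeded, failed int) {
	ctx, cancel := context.WithTimeout(context.Background(),
		time.Duration(timeoutMillis)*time.Millisecond)
	defer cancel()

	endpoints := []endpoint{
		{name: "model-a", latency: 10 * time.Millisecond},
		{name: "model-b", latency: 20 * time.Millisecond},
		{name: "model-c", latency: 5 * time.Second}, // slower than the deadline
	}
	for _, r := range fanOut(ctx, endpoints) {
		if r.err != nil {
			failed++
		} else {
			succeeded++
		}
	}
	return succeeded, failed
}

func main() {
	ok, failed := countOutcomes(300)
	fmt.Printf("succeeded=%d failed=%d\n", ok, failed) // succeeded=2 failed=1
}
```

The slow endpoint does not hold up the whole request: it is cut off at the deadline while the two fast replies are kept.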

Go in the AI Infrastructure Stack

AI applications have created new demand for Go in the infrastructure layer. The systems that orchestrate AI workflows — load balancers that route requests to model endpoints, streaming servers that handle Server-Sent Events for real-time AI responses, embedding pipeline workers that process document batches — all benefit from Go's combination of high throughput, low latency, and operational simplicity.

- API gateways with model routing and load balancing

- Streaming servers for real-time AI responses (SSE, WebSocket)

- Worker pools for batch embedding and inference pipelines

- Service mesh sidecars for AI-specific observability

- CLI tools for ML pipeline orchestration

- gRPC services for internal model serving coordination
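
As one example from the list, a worker pool for batch embedding can be sketched with a channel and a fixed number of goroutines. The embed function here is a hypothetical placeholder that returns the document length; a real worker would call an embedding model instead.

```go
package main

import (
	"fmt"
	"sync"
)

// embed is a hypothetical placeholder for a real embedding call.
// It returns the document length so the example stays self-contained.
func embed(doc string) int {
	return len(doc)
}

// embedAll processes docs with a fixed number of workers and returns
// the results in the same order as the input.
func embedAll(docs []string, workers int) []int {
	if workers < 1 {
		workers = 1 // avoid a deadlock when no worker would read from jobs
	}
	type job struct {
		idx int
		doc string
	}
	jobs := make(chan job)
	out := make([]int, len(docs))

	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for j := range jobs {
				out[j.idx] = embed(j.doc) // each job owns a distinct index
			}
		}()
	}

	for i, d := range docs {
		jobs <- job{idx: i, doc: d}
	}
	close(jobs) // lets the workers' range loops end
	wg.Wait()
	return out
}

func main() {
	fmt.Println(embedAll([]string{"go", "rust", "python"}, 2)) // [2 4 6]
}
```

The worker count caps concurrency, which matters when the downstream model endpoint has rate limits or limited capacity.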

When Not to Use Go

Go is not the right choice for everything. Data science and ML model development belong in Python — the ecosystem is insurmountable. Frontend applications belong in TypeScript. Systems programming with zero-cost abstractions belongs in Rust. Go's sweet spot is the backend service layer: API servers, workers, infrastructure tooling, and the orchestration code that connects AI models to production systems.

Adopting Go for Backend Services

Pick the next backend service that needs to be built and write it in Go. Do not rewrite an existing Python or Node.js service: migration projects carry high overhead and tend to hurt morale.

Go gives you flexibility in project layout. Pick a structure (standard Go project layout or Ben Johnson's approach) and enforce it across all services. Consistency across services is Go's biggest team productivity multiplier.

Go's standard testing package and the table-driven test pattern produce highly readable, maintainable test suites. Establish the pattern early and it scales naturally.
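
A table-driven test might look like the sketch below. The pickModel function and its thresholds are hypothetical, invented only to give the test something to check. Everything is shown in one file for brevity; in a real repository TestPickModel would live in a file ending in _test.go and be run with go test.

```go
package main

import (
	"fmt"
	"testing"
)

// pickModel is a hypothetical routing rule: short prompts go to a small
// model, long prompts to a large one, and empty prompts are rejected.
func pickModel(promptTokens int) string {
	switch {
	case promptTokens <= 0:
		return "reject"
	case promptTokens <= 1000:
		return "small"
	default:
		return "large"
	}
}

// TestPickModel lists every case as a row in a table and runs each row
// as a named subtest, so a failure points at the exact case.
func TestPickModel(t *testing.T) {
	tests := []struct {
		name   string
		tokens int
		want   string
	}{
		{name: "empty prompt", tokens: 0, want: "reject"},
		{name: "short prompt", tokens: 200, want: "small"},
		{name: "boundary", tokens: 1000, want: "small"},
		{name: "long prompt", tokens: 5000, want: "large"},
	}
	for _, tc := range tests {
		t.Run(tc.name, func(t *testing.T) {
			if got := pickModel(tc.tokens); got != tc.want {
				t.Errorf("pickModel(%d) = %q, want %q", tc.tokens, got, tc.want)
			}
		})
	}
}

func main() {
	fmt.Println(pickModel(200)) // small
}
```

Adding a new case is one more row in the table, which is why the pattern stays readable as the suite grows.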

Go interfaces are implicit (no implements keyword). Define interfaces where services interact with external systems (databases, APIs, message queues) for testability and flexibility.
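
A minimal sketch of this idea follows. The names EmbeddingStore, memoryStore, and Indexer are hypothetical. Note that memoryStore never declares that it implements the interface; having a matching Save method is enough.

```go
package main

import (
	"errors"
	"fmt"
)

// EmbeddingStore describes only what the service needs from storage.
type EmbeddingStore interface {
	Save(id string, vector []float32) error
}

// memoryStore is an in-memory fake for tests. It satisfies EmbeddingStore
// implicitly because it has a Save method with the same signature.
type memoryStore struct {
	data map[string][]float32
}

func (m *memoryStore) Save(id string, vector []float32) error {
	if id == "" {
		return errors.New("empty id")
	}
	m.data[id] = vector
	return nil
}

// Indexer depends on the interface, not on a concrete database client,
// so a real store and a fake store are interchangeable.
type Indexer struct {
	store EmbeddingStore
}

func (ix Indexer) Index(id string, vector []float32) error {
	return ix.store.Save(id, vector)
}

func main() {
	store := &memoryStore{data: map[string][]float32{}}
	ix := Indexer{store: store}

	fmt.Println(ix.Index("doc-1", []float32{0.1, 0.2})) // <nil>
	fmt.Println(len(store.data))                        // 1
}
```

In production the same Indexer could be given a type backed by a real database, with no change to Indexer itself.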

“Go is boring by design. In production systems, boring is a feature. Every clever language feature your team does not have to debug at 3 AM is time and energy saved for solving actual business problems.”

Frequently Asked Questions

Why does Go dominate high-throughput backend services?

Go's dominance in high-throughput backends comes from: a lightweight goroutine scheduler that handles hundreds of thousands of concurrent connections efficiently, a fast garbage collector optimized for low-latency workloads, simple compilation to a single static binary, and a standard library that includes a production-quality HTTP server. The language design prioritizes operational simplicity over developer expressiveness.
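
As a small illustration of how far the standard library goes, the sketch below streams tokens as Server-Sent Events using only net/http, and exercises the handler in memory with net/http/httptest. The handler name streamTokens, the helper render, and the /stream path are assumptions made for this example.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// streamTokens returns a handler that writes each token as one SSE event
// and flushes after every event so the client receives it immediately.
func streamTokens(tokens []string) http.HandlerFunc {
	return func(w http.ResponseWriter, r *http.Request) {
		w.Header().Set("Content-Type", "text/event-stream")
		w.Header().Set("Cache-Control", "no-cache")
		flusher, canFlush := w.(http.Flusher)

		for _, tok := range tokens {
			select {
			case <-r.Context().Done():
				return // client went away; stop producing
			default:
			}
			fmt.Fprintf(w, "data: %s\n\n", tok)
			if canFlush {
				flusher.Flush()
			}
		}
	}
}

// render runs the handler against an in-memory recorder and returns the
// response body, which allows trying it without opening a port.
func render(tokens []string) string {
	rec := httptest.NewRecorder()
	req := httptest.NewRequest(http.MethodGet, "/stream", nil)
	streamTokens(tokens)(rec, req)
	return rec.Body.String()
}

func main() {
	fmt.Print(render([]string{"Hello", "world"}))
}
```

To serve it for real, the handler could be registered with http.Handle and started with http.ListenAndServe; each connection then runs in its own goroutine without extra code.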

When should I use Go vs Node.js or Python for a backend service?

Use Go for: CPU-bound processing, services requiring consistent low-latency under high concurrency, network proxies and gateways, and services where memory footprint and startup time matter. Use Node.js when: your team is JavaScript-heavy, the workload is I/O-bound, or ecosystem compatibility with npm packages is required. Use Python for: ML inference, data pipelines, and scripting-heavy services where development speed trumps runtime performance.

What are the most common Go backend performance mistakes?

The most common ones are: goroutine leaks caused by channels that are never drained or context cancellation that is never checked; unnecessary allocations in hot paths, which increase GC pressure; misuse of sync.Mutex that causes lock contention; HTTP clients left at default connection-pool settings; and misunderstanding how the Go scheduler treats blocking system calls (which tie up an OS thread) versus network I/O (which parks only the goroutine).
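
Two of these mistakes have short, well-known remedies, sketched below. The function names and the specific pool sizes and timeouts are illustrative assumptions, not recommendations. The first part configures an HTTP client explicitly; the second shows a producer goroutine that exits when its context is cancelled instead of blocking forever on a send.

```go
package main

import (
	"context"
	"fmt"
	"net/http"
	"time"
)

// newHTTPClient returns a client with an explicit timeout and connection
// pool limits. The numbers are placeholders to tune per workload.
func newHTTPClient() *http.Client {
	return &http.Client{
		Timeout: 10 * time.Second,
		Transport: &http.Transport{
			MaxIdleConns:        100,
			MaxIdleConnsPerHost: 20, // the default is 2, often too low for one busy upstream
			IdleConnTimeout:     90 * time.Second,
		},
	}
}

// generate sends increasing integers until ctx is cancelled. Selecting on
// ctx.Done() lets the goroutine exit once the consumer stops reading.
func generate(ctx context.Context) <-chan int {
	ch := make(chan int)
	go func() {
		defer close(ch)
		for i := 0; ; i++ {
			select {
			case ch <- i:
			case <-ctx.Done():
				return
			}
		}
	}()
	return ch
}

// firstN reads n values and then cancels the producer via the deferred
// cancel, so no goroutine is left blocked on the channel.
func firstN(n int) []int {
	ctx, cancel := context.WithCancel(context.Background())
	defer cancel()

	ch := generate(ctx)
	out := make([]int, 0, n)
	for len(out) < n {
		out = append(out, <-ch)
	}
	return out
}

func main() {
	fmt.Println(firstN(3))               // [0 1 2]
	fmt.Println(newHTTPClient().Timeout) // 10s
}
```

Without the ctx.Done() case, the producer in generate would stay blocked on its next send for the life of the process after firstN returns.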

How does Go handle concurrency compared to async/await in Node.js?

Go uses goroutines (green threads) managed by the runtime scheduler — you write sequential code and the runtime handles concurrency. Node.js uses an event loop with async/await — you explicitly mark async boundaries. Go's model is easier to reason about for complex concurrent workflows; Node.js requires careful management of the event loop to avoid blocking.

Is Go worth learning for backend development in 2026?

Yes. Go is the dominant language for infrastructure software, cloud-native services, CLIs, and performance-critical backends. It is the primary language for Kubernetes, Docker, Prometheus, and most major cloud-native tooling. For backend engineers, Go proficiency opens roles in infrastructure, platform engineering, and any team running high-throughput services.
