What’s going on with the Open LLM Leaderboard?

What Happened

What’s going on with the Open LLM Leaderboard?

Fordel's Take

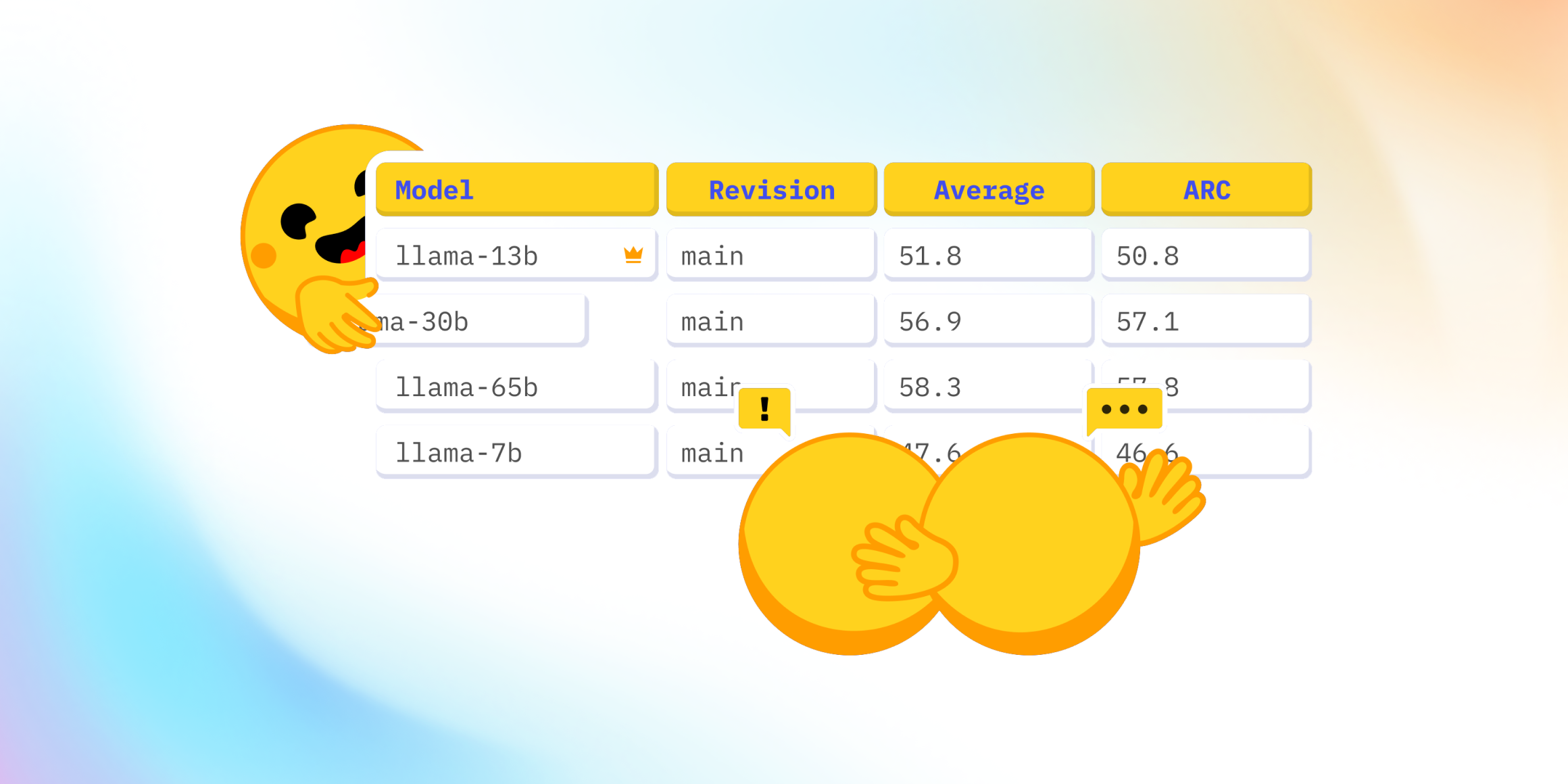

Hugging Face yanked 30 models off the Open LLM Leaderboard after catching systematic prompt injection attacks that inflated MT-Bench scores by up to 12%. The cheat was as simple as stuffing the system prompt with hidden instructions telling the judge LLM to rate responses higher.

The leaderboard was your shortcut for picking base models; now every download risks a Trojan horse that tanks RAG performance in production. Running Llama-3-8B because it “ranked #2” is just gambling with 4 GPU-hours of your fine-tuning budget on a model that might hallucinate 40% more than the clean 7B that sits at #15.

Teams shipping customer-facing agents this quarter need to re-run their own evals—everyone else can keep trusting the old offline numbers.

What To Do

Pin to the 2024-05-01 snapshot and test against your own validation set instead of trusting live rankings because prompt-injection hacks make the current board worse than random

Cited By

React

Get the weekly AI digest

The stories that matter, with a builder's perspective. Every Thursday.