Smaller is better: Q8-Chat, an efficient generative AI experience on Xeon

What Happened

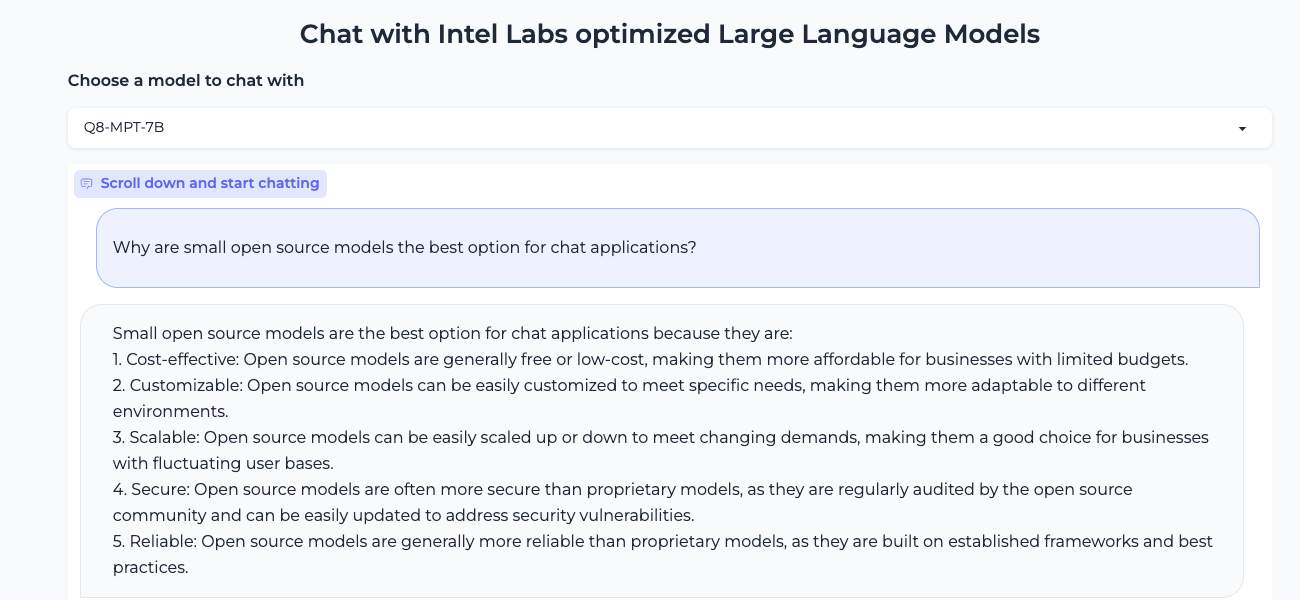

Smaller is better: Q8-Chat, an efficient generative AI experience on Xeon

Fordel's Take

"Smaller is better" is old news, but it is still the defining constraint for running inference on constrained hardware. At its core, Q8-Chat relies on a clever quantization trick: storing model weights as 8-bit integers instead of 16- or 32-bit floats, so a bigger model fits on cheaper hardware like a Xeon server without sacrificing too much quality. It's about making the impossible possible on limited silicon.
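To make the "squeeze a bigger model onto cheaper hardware" point concrete, here is a minimal sketch of symmetric per-tensor int8 quantization plus the weight-memory arithmetic for a hypothetical 7-billion-parameter model. This is an illustrative assumption, not necessarily the scheme Q8-Chat uses; production recipes typically add per-channel scales and calibration. The function names are hypothetical.

```python
# Minimal sketch: symmetric per-tensor int8 quantization (assumed scheme,
# not necessarily what Q8-Chat uses) and weight-only memory arithmetic.

def quantize_int8(weights):
    """Map floats to int8 codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp into the int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_int8(codes, scale):
    """Recover approximate float weights from int8 codes."""
    return [c * scale for c in codes]

def weight_memory_gb(num_params, bytes_per_param):
    """Weight storage only; ignores activations and the KV cache."""
    return num_params * bytes_per_param / 1e9

if __name__ == "__main__":
    weights = [0.12, -0.5, 0.031, 0.9, -0.77]
    codes, scale = quantize_int8(weights)
    restored = dequantize_int8(codes, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"max error {max_err:.4f} (rounding bound scale/2 = {scale / 2:.4f})")

    # Hypothetical 7B-parameter model: 4, 2 and 1 bytes per weight.
    for label, nbytes in [("fp32", 4), ("bf16", 2), ("int8", 1)]:
        print(f"{label}: {weight_memory_gb(7e9, nbytes):.0f} GB")
```

Each weight costs one byte instead of two (bf16) or four (fp32), so the same model needs roughly half to a quarter of the memory, at the price of a per-weight rounding error bounded by half the scale.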

The real win isn't the speed boost on a Xeon; it's the reduction in operational cost. Running a massive LLM on an A100 can cost thousands of dollars in compute time; running a quantized Q8-Chat-style model on decent CPU server hardware cuts that cost significantly.

We need to stop treating "smaller" as a theoretical ideal and start treating it as a deployment necessity. It's less about the math and more about making the infrastructure affordable.

What To Do

Prioritize deploying quantized models like the one behind Q8-Chat on existing enterprise hardware before investing in new GPU clusters. Impact: high.
