Scaling up BERT-like model Inference on modern CPU - Part 2

Fordel's Take

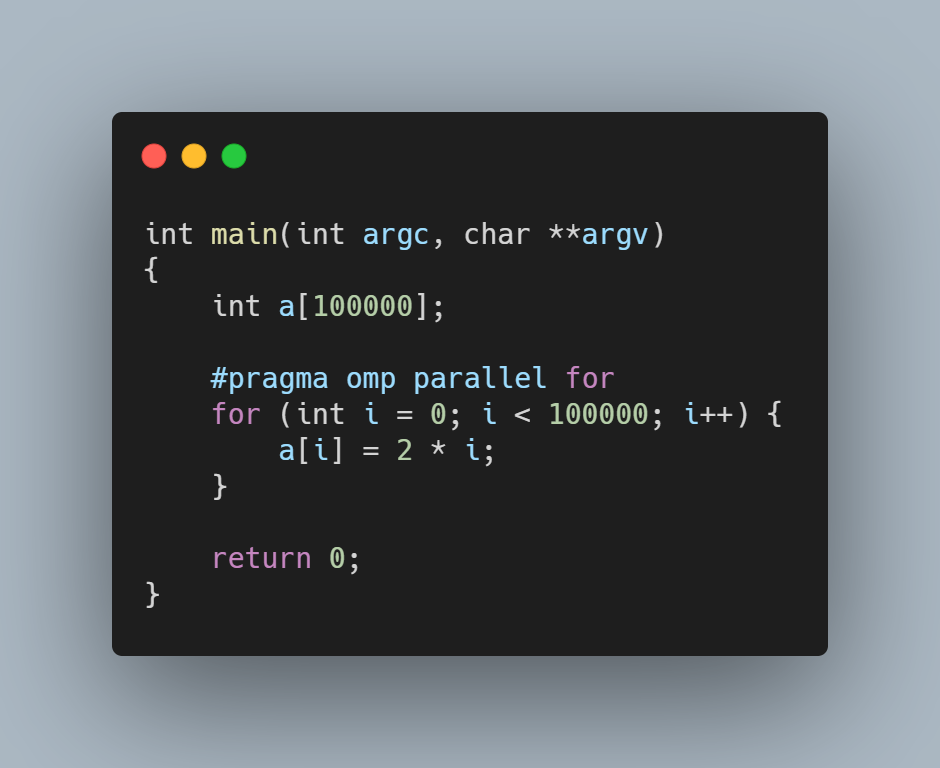

scaling BERT-like models on modern CPUs is less about hitting theoretical peak throughput and more about relentless engineering of memory layout and batching. the job is to keep the working set in L3 cache and minimize trips to main memory, which is where the real performance difference shows up. scaling here takes smarter software, not faster silicon.
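to make the L3 point concrete, here's a rough back-of-the-envelope sketch of how much weight data an encoder has to pull through the cache. the layer dimensions are the published BERT-base sizes (hidden 768, intermediate 3072, 12 layers); the 32 MiB L3 figure is an assumption for illustration, not a specific CPU, and biases, LayerNorm, embeddings and activations are ignored.

```python
# Rough weight footprint of a BERT-base encoder versus an assumed L3 cache.
HIDDEN = 768            # BERT-base hidden size
INTERMEDIATE = 3072     # BERT-base feed-forward size
NUM_LAYERS = 12         # BERT-base encoder depth
ASSUMED_L3_BYTES = 32 * 1024 * 1024  # hypothetical 32 MiB L3, for illustration only


def layer_weight_params(hidden: int = HIDDEN, intermediate: int = INTERMEDIATE) -> int:
    """Matrix weights in one encoder layer (biases and LayerNorm ignored)."""
    attention = 4 * hidden * hidden            # Q, K, V and output projections
    feed_forward = 2 * hidden * intermediate   # up and down projections
    return attention + feed_forward


def weight_bytes(num_layers: int, bytes_per_weight: int) -> int:
    """Total bytes of matrix weights for the given depth and precision."""
    return num_layers * layer_weight_params() * bytes_per_weight


def fits_in_cache(num_layers: int, bytes_per_weight: int,
                  cache_bytes: int = ASSUMED_L3_BYTES) -> bool:
    return weight_bytes(num_layers, bytes_per_weight) <= cache_bytes


if __name__ == "__main__":
    for label, width in (("fp32", 4), ("int8", 1)):
        one = weight_bytes(1, width) / 2**20
        full = weight_bytes(NUM_LAYERS, width) / 2**20
        print(f"{label}: {one:.2f} MiB per layer, {full:.2f} MiB for all layers")
```

under these assumptions a single fp32 layer squeezes into the cache but the full stack does not, so weights get streamed from main memory on every forward pass unless the work is arranged to reuse them while they're resident.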

we're no longer seeing near-linear gains just by throwing more cores at it. the bottleneck has shifted to memory bandwidth and to how well the code exploits instruction-level parallelism. you need kernels specifically tuned for vector (SIMD) instructions to get serious throughput from modern cores.
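a quick roofline-style estimate shows why memory bandwidth dominates at small batch sizes and why batching helps. this is a sketch under stated assumptions: the peak FLOP rate and bandwidth below are made-up round numbers rather than measurements of any real CPU, and the byte count assumes each tensor is touched exactly once.

```python
# Roofline-style check: is one linear layer memory-bound or compute-bound?
ASSUMED_PEAK_FLOPS = 2.0e12   # hypothetical peak, FLOP/s
ASSUMED_BANDWIDTH = 1.0e11    # hypothetical memory bandwidth, bytes/s
MACHINE_BALANCE = ASSUMED_PEAK_FLOPS / ASSUMED_BANDWIDTH  # FLOPs needed per byte


def arithmetic_intensity(tokens: int, in_features: int, out_features: int,
                         bytes_per_value: int = 4) -> float:
    """FLOPs per byte for Y = X @ W, with X of shape [tokens, in_features]."""
    flops = 2 * tokens * in_features * out_features
    values_moved = (in_features * out_features   # weights W
                    + tokens * in_features       # input activations X
                    + tokens * out_features)     # output activations Y
    return flops / (values_moved * bytes_per_value)


def is_memory_bound(tokens: int, in_features: int = 768,
                    out_features: int = 3072) -> bool:
    """True when the layer cannot reach peak FLOPs on the assumed machine."""
    return arithmetic_intensity(tokens, in_features, out_features) < MACHINE_BALANCE


if __name__ == "__main__":
    for tokens in (1, 8, 32, 128):
        ai = arithmetic_intensity(tokens, 768, 3072)
        print(f"tokens={tokens}: {ai:.2f} FLOP/byte, memory-bound={is_memory_bound(tokens)}")
```

with a single token the weight matrix is read once and used once, so the layer sits far below the machine balance; processing more tokens per pass reuses the same weights and pushes the intensity up.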

look, if you're running into CPU inference walls, stop thinking about model size and start thinking about cache locality.

What To Do

rewrite inference kernels to maximize cache utilization. impact: high
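one common way to do that is loop tiling (blocking): work on small sub-blocks of the matrices so each block gets reused while it's still in cache. the sketch below only illustrates the loop structure in plain Python; the function names and the tile size are hypothetical, and a real kernel would be written with SIMD intrinsics or come from an optimized math library rather than look like this.

```python
# Loop tiling (blocking) for matrix multiplication, shown next to a naive version.
from typing import List

Matrix = List[List[float]]


def matmul_naive(a: Matrix, b: Matrix) -> Matrix:
    """Reference triple loop: for each output element, walk a full row and column."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]
            c[i][j] = acc
    return c


def matmul_tiled(a: Matrix, b: Matrix, tile: int = 4) -> Matrix:
    """Same result, but computed tile by tile so small blocks are reused."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, tile):              # block of output rows
        for p0 in range(0, k, tile):          # block of the reduction dimension
            for j0 in range(0, m, tile):      # block of output columns
                for i in range(i0, min(i0 + tile, n)):
                    row_a, row_c = a[i], c[i]
                    for p in range(p0, min(p0 + tile, k)):
                        a_ip, row_b = row_a[p], b[p]
                        # Innermost loop walks contiguous elements of one row of b and c.
                        for j in range(j0, min(j0 + tile, m)):
                            row_c[j] += a_ip * row_b[j]
    return c
```

in a compiled kernel the tile size would be chosen so the blocks of `a`, `b` and `c` in flight fit in the target cache level, and the innermost loop is the one that maps onto vector instructions.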
