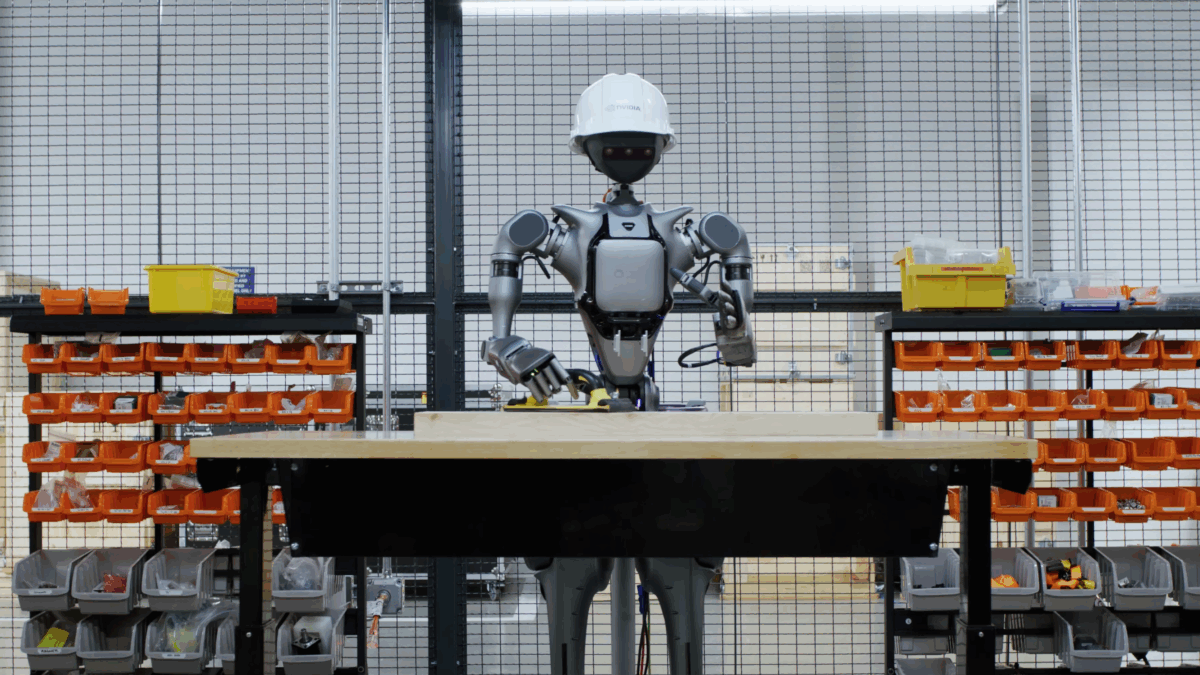

Nvidia wants to scale robot simulation training with Lyra 2.0

What Happened

Nvidia researchers have unveiled Lyra 2.0, a system that generates large, coherent 3D environments from a single photograph. The resulting scenes can be explored in real time and used directly in robot simulations.

Our Take

Nvidia's Lyra 2.0 converts a single photograph into a navigable 3D environment usable in real-time robot simulations. No LIDAR, no manual asset creation: one image in, explorable scene out.

Simulation data scarcity is the actual bottleneck for robotics foundation models, not model architecture. Teams using Isaac Sim spend weeks on environment curation; Lyra 2.0 directly targets that gap. Developers building embodied AI are over-indexing on real-world datasets and ignoring how much synthetic environment diversity drives sim-to-real transfer performance.

Robotics teams running sim-to-real pipelines on Isaac Lab or MuJoCo need to watch the release timeline. Pure-software teams building RAG or language agents can ignore this entirely.

What To Do

Once Lyra 2.0 is available, use it to generate environment variants for Isaac Sim instead of hand-curating scenes, because synthetic diversity matters more than data realism at robotics training scale.
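Generating variants at scale usually starts with a plan of which variations to produce. Here is a minimal sketch of such a plan in plain Python. It does not call Lyra 2.0 or Isaac Sim, and assumes nothing about their APIs. `SceneVariant`, `sample_variants`, the photo filenames, and the parameter ranges are all hypothetical placeholders chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneVariant:
    """One planned environment variant (all fields are illustrative)."""
    source_photo: str       # seed photograph the scene would be generated from
    light_intensity: float  # relative lighting scale
    floor_friction: float   # friction coefficient to assign in the simulator
    clutter_objects: int    # number of extra objects to scatter in the scene


def sample_variants(photos, per_photo, seed=0):
    """Sample `per_photo` randomized variants for each seed photo.

    A fixed seed keeps the plan reproducible across training runs.
    """
    rng = random.Random(seed)
    variants = []
    for photo in photos:
        for _ in range(per_photo):
            variants.append(
                SceneVariant(
                    source_photo=photo,
                    light_intensity=rng.uniform(0.3, 1.5),
                    floor_friction=rng.uniform(0.4, 1.0),
                    clutter_objects=rng.randint(0, 12),
                )
            )
    return variants


if __name__ == "__main__":
    # Hypothetical seed photos; replace with real image paths.
    for variant in sample_variants(["kitchen.jpg", "warehouse.jpg"], per_photo=3):
        print(variant)
```

Each `SceneVariant` would then be handed to whatever generation and simulation tooling a team actually uses. Keeping the plan separate from the generator makes it easy to log which variations a policy was trained on.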

Builder's Brief

What Skeptics Say

Single-image 3D reconstruction produces plausible-looking geometry, not physically accurate geometry. Robots trained on scenes that look right but behave wrong may transfer to the real world worse than robots trained on sparse but accurate LIDAR data.
