Introducing Snowball Fight ☃️, our first ML-Agents environment

What Happened

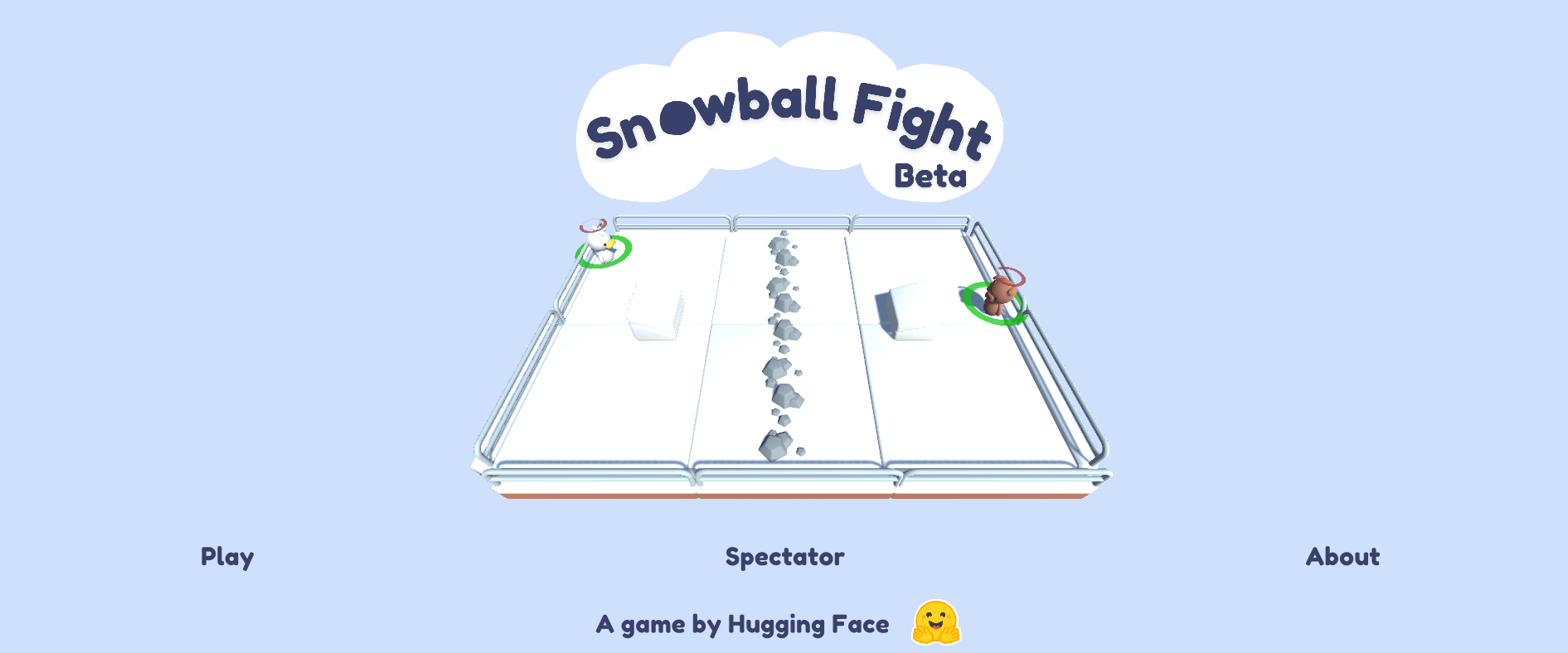


Our Take

Hugging Face released Snowball Fight, its first environment built with Unity ML-Agents. Two opposing agents, one per team, throw snowballs at each other using ray-cast observations and a discrete action space: no vision stack, no complex reward shaping.

Self-play environments reduce the need for handcrafted dense reward functions, which can cut iteration cost on NPC behavior projects. Tuning reward signals by hand can eat weeks, whereas competitive self-play can often reach a comparable policy from a simple win/loss signal. For competitive agent tasks, heavily reward-engineered training is often just burning GPU time.
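To make the contrast concrete, here is a minimal sketch of the two reward styles. It is illustrative only: the team ids, event names, and weights are hypothetical and are not the rewards Snowball Fight actually uses.

```python
from typing import Dict, Optional, Tuple

# Hypothetical hand-tuned shaping terms (assumption, not from Snowball Fight).
# Every weight here is a knob that has to be tuned and re-tuned.
SHAPING_WEIGHTS: Dict[str, float] = {
    "hit_landed": 0.5,
    "hit_taken": -0.5,
    "distance_closed": 0.01,
    "idle_step": -0.001,
}


def shaped_reward(events: Dict[str, float]) -> float:
    """Dense, reward-engineered signal: weighted sum of per-step event counts."""
    return sum(SHAPING_WEIGHTS[name] * count for name, count in events.items())


def self_play_rewards(
    winner: Optional[int], team_ids: Tuple[int, int] = (0, 1)
) -> Dict[int, float]:
    """Sparse zero-sum terminal signal: +1 to the winner, -1 to the loser, 0 on a draw."""
    if winner is None:
        return {team: 0.0 for team in team_ids}
    return {team: (1.0 if team == winner else -1.0) for team in team_ids}


if __name__ == "__main__":
    print(shaped_reward({"hit_landed": 2, "idle_step": 100}))
    print(self_play_rewards(winner=0))
```

The self-play version has no weights to tune; the opponent supplies the curriculum instead.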

Game AI teams prototyping competitive NPC behaviors should fork this env and swap the domain. Developers doing single-agent RL or anything outside Unity can skip it entirely.

What To Do

Use ML-Agents Snowball Fight as a self-play template instead of building a competitive env from scratch, because the observation schema and team reward structure are already wired up.
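On the training side, a fork mostly means adjusting the self-play block of the ML-Agents trainer config. The sketch below builds that block as a Python dict and prints it as YAML-style text. The key names follow the ML-Agents trainer configuration format; the behavior name `SnowballFight` and every numeric value are placeholder assumptions, not the settings shipped with the environment.

```python
from typing import Any, Dict, List

# Assumption: behavior name and all values are placeholders for illustration.
CONFIG: Dict[str, Any] = {
    "behaviors": {
        "SnowballFight": {
            "trainer_type": "ppo",
            "max_steps": 5000000,
            "reward_signals": {"extrinsic": {"gamma": 0.99, "strength": 1.0}},
            "self_play": {
                "save_steps": 50000,    # how often a policy snapshot is saved
                "team_change": 200000,  # steps before the learning team switches
                "swap_steps": 2000,     # steps between opponent snapshot swaps
                "window": 10,           # number of past snapshots to sample from
                "play_against_latest_model_ratio": 0.5,
                "initial_elo": 1200.0,
            },
        }
    }
}


def to_yaml_lines(node: Dict[str, Any], indent: int = 0) -> List[str]:
    """Render a nested dict of scalars as indented `key: value` lines."""
    lines: List[str] = []
    pad = " " * indent
    for key, value in node.items():
        if isinstance(value, dict):
            lines.append(f"{pad}{key}:")
            lines.extend(to_yaml_lines(value, indent + 2))
        else:
            lines.append(f"{pad}{key}: {value}")
    return lines


if __name__ == "__main__":
    print("\n".join(to_yaml_lines(CONFIG)))
```

The emitter only handles nested dicts of plain scalars, which is enough for this fragment; a real project would write the YAML file directly.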
