Hugging Face Reads, Feb. 2021 - Long-range Transformers

What Happened

Hugging Face published "Hugging Face Reads, Feb. 2021 - Long-range Transformers", a roundup of research on efficient attention for long sequences.

Fordel's Take

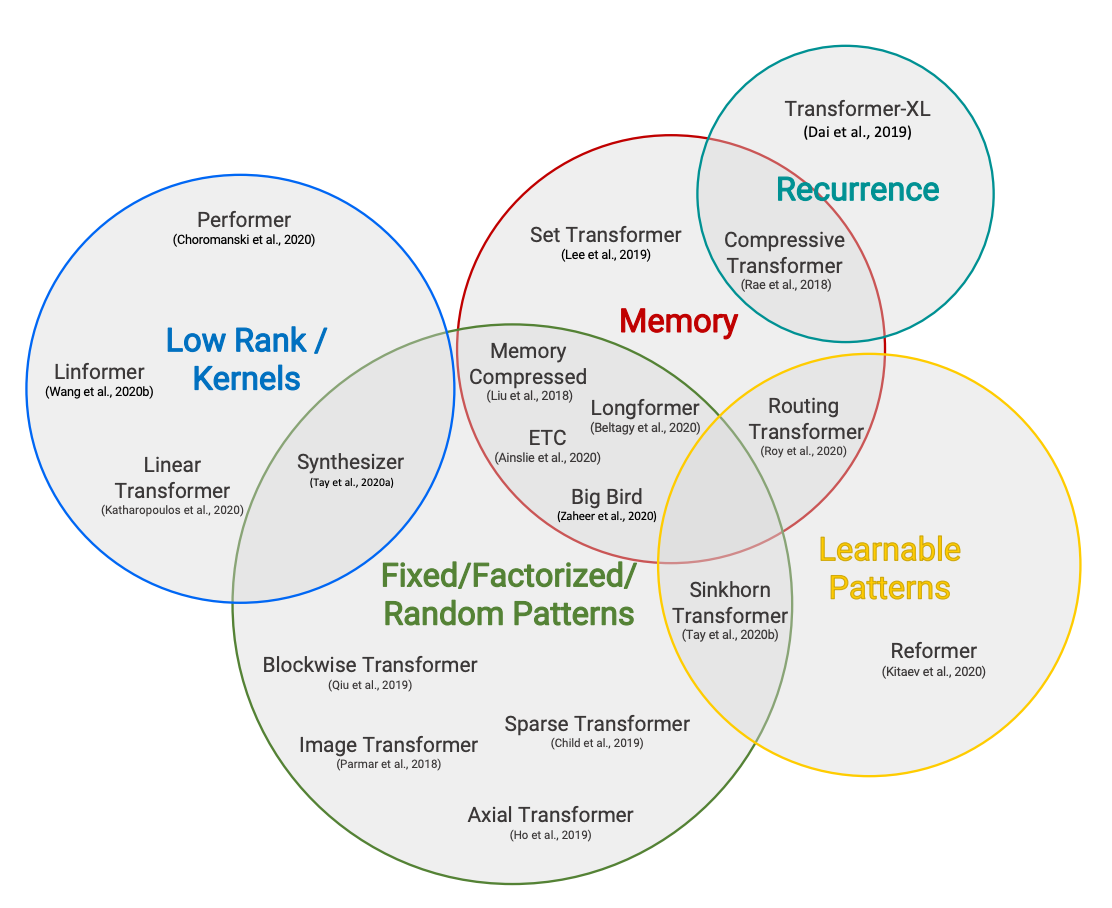

Standard self-attention in Transformers is O(n²) in sequence length n. In February 2021, Hugging Face catalogued a wave of efficient attention architectures, including Longformer, BigBird, and Reformer, that trade some accuracy for linear (Longformer, BigBird) or log-linear (Reformer) complexity on sequences beyond 512 tokens.
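To make the complexity difference concrete, here is a minimal pure-Python sketch of dense attention next to sliding-window attention. It is a simplification, not Longformer's implementation: single head, no learned projections, and no global tokens. The function names and the `window` parameter are illustrative assumptions. In the windowed version each query scores only the keys within `window // 2` positions on either side, so the number of dot products grows as n × window rather than n².

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max score before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    # Scaled dot-product attention for one query over the given keys/values.
    scale = 1.0 / math.sqrt(len(q))
    weights = softmax([dot(q, k) * scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]


def dense_attention(Q: List[Vector], K: List[Vector], V: List[Vector]) -> List[Vector]:
    # Every query scores every key: n * n dot products, O(n^2).
    return [attend(q, K, V) for q in Q]


def sliding_window_attention(
    Q: List[Vector], K: List[Vector], V: List[Vector], window: int
) -> List[Vector]:
    # Query i scores only keys in [i - window//2, i + window//2], clipped to the
    # sequence: at most window + 1 dot products per query, so O(n * window).
    n = len(Q)
    half = window // 2
    out = []
    for i, q in enumerate(Q):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        out.append(attend(q, K[lo:hi], V[lo:hi]))
    return out
```

When the window is at least twice the sequence length, every query sees every key and the two functions return the same result, which makes a handy sanity check. Real sparse models add global tokens (Longformer) or global plus random blocks (BigBird) on top of the local window.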

For RAG pipelines stuffing 8K+ tokens into a single prompt, this history matters: many teams reaching for GPT-4 to handle long context are paying frontier-model prices when sparse or sliding-window variants could do the job at a fraction of the cost. Assuming "more context = bigger model" remains one of the most expensive wrong assumptions in production AI.

Teams running document QA or contract analysis on fixed-format inputs should benchmark Longformer or BigBird checkpoints from the Hugging Face Hub before defaulting to frontier models. Teams with highly variable, unstructured input can skip this: sparse attention assumes the relevant context sits mostly inside local windows plus a few global tokens, and that assumption breaks fast there.
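A benchmark like this needs little scaffolding. The sketch below is a model-agnostic harness, assuming you wrap each candidate (for example a Longformer QA checkpoint or a frontier-model API call) in a function that takes a document and a question and returns an answer string. Those wrappers, and the names `Example`, `Report`, `run_benchmark`, and `answer_fn`, are hypothetical. It reports normalised exact match and mean latency per example.

```python
import time
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Example:
    document: str
    question: str
    expected: str


@dataclass
class Report:
    exact_match: float     # fraction of answers equal to `expected` after normalising
    mean_latency_s: float  # mean wall-clock seconds per answer_fn call


def normalise(text: str) -> str:
    # Case- and whitespace-insensitive comparison.
    return " ".join(text.lower().split())


def run_benchmark(
    answer_fn: Callable[[str, str], str], examples: List[Example]
) -> Report:
    if not examples:
        raise ValueError("need at least one example")
    hits = 0
    elapsed = 0.0
    for ex in examples:
        start = time.perf_counter()
        answer = answer_fn(ex.document, ex.question)
        elapsed += time.perf_counter() - start
        if normalise(answer) == normalise(ex.expected):
            hits += 1
    n = len(examples)
    return Report(exact_match=hits / n, mean_latency_s=elapsed / n)
```

Run it once per candidate on the same held-out examples; combining the latency with each candidate's per-call price gives a direct cost comparison.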

What To Do

Benchmark Longformer against GPT-4o on your fixed-format document QA pipeline. At 8K tokens, dense O(n²) attention computes roughly 16x more attention scores than a 512-token sliding window (8192² vs. 8192 × 512), a cost Longformer was designed to avoid back in 2020.
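The 16x figure can be reproduced by counting attention scores, assuming a 512-token sliding window. This counts only query-key scores in the attention layers; feed-forward and projection costs grow linearly with n in both cases, so the end-to-end saving is smaller than the attention-only ratio.

```python
def dense_scores(n: int) -> int:
    # Full self-attention: each of n queries scores all n keys.
    return n * n


def windowed_scores(n: int, w: int) -> int:
    # Sliding-window attention: each query scores about w keys
    # (ignores sequence-edge effects and any global tokens).
    return n * w


n, w = 8192, 512  # 8K-token input, assumed 512-token window
ratio = dense_scores(n) / windowed_scores(n, w)
print(ratio)  # 16.0
```

The ratio simplifies to n / w, so the penalty keeps growing as inputs get longer while the window stays fixed.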
