
Hugging Face

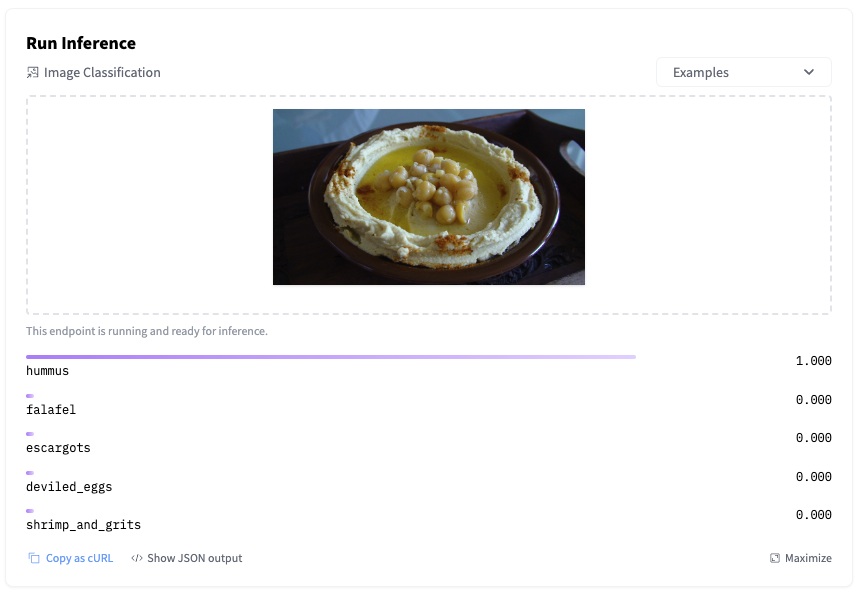

Getting Started with Hugging Face Inference Endpoints

Read the full article, "Getting Started with Hugging Face Inference Endpoints," on Hugging Face.

Fordel's Take

Hugging Face Inference Endpoints is fine for a quick demo or a prototype, but don't mistake it for a robust production solution. Once you hit real throughput demands (say, 100 concurrent requests at sub-50 ms latency), you'll quickly find you need a custom Kubernetes deployment or a specialized serving framework.
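Before deciding, it helps to measure whether a given deployment actually meets a target like 100 concurrent requests under 50 ms. The sketch below is a minimal, hedged illustration in Python, not a Hugging Face tool: `send_request` is a hypothetical stand-in for whatever client call hits your endpoint (for example an HTTP POST to your endpoint URL), and the 100-request and 50 ms figures are only the example numbers from this take, not documented limits of the service.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def measure_latencies(send_request: Callable[[], None],
                      concurrency: int = 100,
                      total_requests: int = 100) -> List[float]:
    """Call `send_request` `total_requests` times with up to `concurrency`
    calls in flight; return each call's latency in milliseconds.

    Timing starts inside the worker, so time spent waiting in the client-side
    pool queue is excluded. Threads are adequate because real endpoint calls
    are I/O-bound.
    """
    def timed_call(_: int) -> float:
        start = time.perf_counter()
        send_request()  # hypothetical: replace with your real endpoint call
        return (time.perf_counter() - start) * 1000.0

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(timed_call, range(total_requests)))


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile, with pct in the range 0-100."""
    if not values:
        raise ValueError("no latencies recorded")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms: List[float], budget_ms: float = 50.0) -> Dict[str, object]:
    """Report p50/p95/p99 and whether the p99 stays within the latency budget."""
    p99 = percentile(latencies_ms, 99)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "p99_ms": p99,
        "meets_budget": p99 <= budget_ms,
    }


if __name__ == "__main__":
    # Hypothetical stand-in for a real endpoint call: just sleeps about 10 ms.
    def fake_request() -> None:
        time.sleep(0.01)

    print(summarize(measure_latencies(fake_request, concurrency=100, total_requests=100)))
```

Judging against the p99 rather than the mean is a deliberate choice: a deployment can average well under the budget while its tail latency under concurrency blows straight through it.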

What To Do

Don't rely on HF Endpoints for mission-critical, low-latency production traffic; build your own serving layer if you need strict control over costs and performance.