Few-shot learning in practice: GPT-Neo and the 🤗 Accelerated Inference API

Fordel's Take

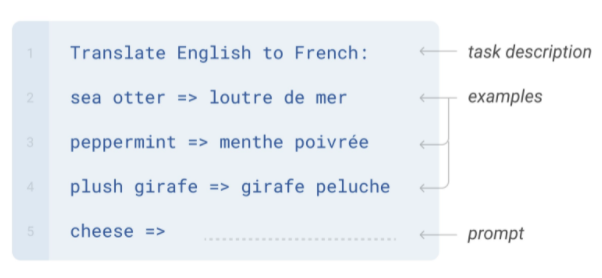

Look, few-shot learning isn't some magic trick; it's the shortcut we reach for when fine-tuning a big model is too expensive or too slow. With GPT-Neo we skip the task-specific training and put a handful of worked examples directly in the prompt. The Accelerated Inference API matters because it serves the model from hosted infrastructure, so we aren't waiting ages for results on our own hardware. It's about cutting latency when deploying a model that is large enough to be painful to host yourself. We're just making the existing tools work faster, which is the only metric that matters to me right now.

Honestly, most teams waste time brute-forcing a fine-tuning run when a well-chosen few-shot prompt gets them roughly 80% of the way there with no training at all. It's a pragmatic way to ship specialized NLP tasks without needing an A100 cluster.
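To make the "just provide examples" idea concrete, here is a minimal sketch of how a few-shot prompt could be assembled. The function name `build_few_shot_prompt`, the `Tweet:`/`Sentiment:` field labels, the `###` separator, and the sample data are all illustrative assumptions, not something prescribed by GPT-Neo or the API.

```python
def build_few_shot_prompt(task_description, examples, query):
    """Assemble a few-shot prompt: task description, worked examples, open query.

    All field labels and the "###" separator are illustrative choices.
    """
    lines = [task_description, ""]
    for text, label in examples:
        lines.append(f"Tweet: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("###")  # separator between examples
    # The final entry is left unlabeled so the model completes it.
    lines.append(f"Tweet: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical sample data, made up for illustration.
examples = [
    ("I loved the new update, everything feels faster.", "Positive"),
    ("The app keeps crashing on startup.", "Negative"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each tweet.",
    examples,
    "Support answered my ticket within minutes.",
)
print(prompt)
```

The prompt ends on an open `Sentiment:` line, so the model's continuation is the predicted label. Swapping the examples changes the task without touching any model weights.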

What To Do

Use the Accelerated Inference API to try few-shot prompts against GPT-Neo models right away, before committing to a fine-tuning run.

Impact: medium
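As a rough sketch of what that test could look like, the snippet below builds an HTTP request for a hosted GPT-Neo model using only the Python standard library. Treat the details as assumptions: the endpoint URL and the `max_new_tokens` / `return_full_text` parameters follow the hosted Inference API's text-generation conventions as I understand them and may have changed, `HF_API_TOKEN` is a hypothetical environment variable name, and the helper names are made up for illustration.

```python
import json
import urllib.request

# Assumed endpoint for the hosted model; check the current API docs before use.
API_URL = "https://api-inference.huggingface.co/models/EleutherAI/gpt-neo-2.7B"


def build_request(prompt, api_token, max_new_tokens=5):
    """Build (but do not send) a POST request carrying a few-shot prompt."""
    payload = {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,  # keep completions short for labels
            "return_full_text": False,         # ask for the continuation only
        },
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query(prompt, api_token):
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(build_request(prompt, api_token), timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Usage (requires network access and a valid token; not executed here):
#   import os
#   result = query("Tweet: Great service!\nSentiment:", os.environ["HF_API_TOKEN"])
#   print(result)
```

Keeping request construction separate from sending makes the payload easy to inspect and unit-test without touching the network.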
