Distributed Training: Train BART/T5 for Summarization using 🤗 Transformers and Amazon SageMaker

What Happened

Distributed Training: Train BART/T5 for Summarization using 🤗 Transformers and Amazon SageMaker

Fordel's Take

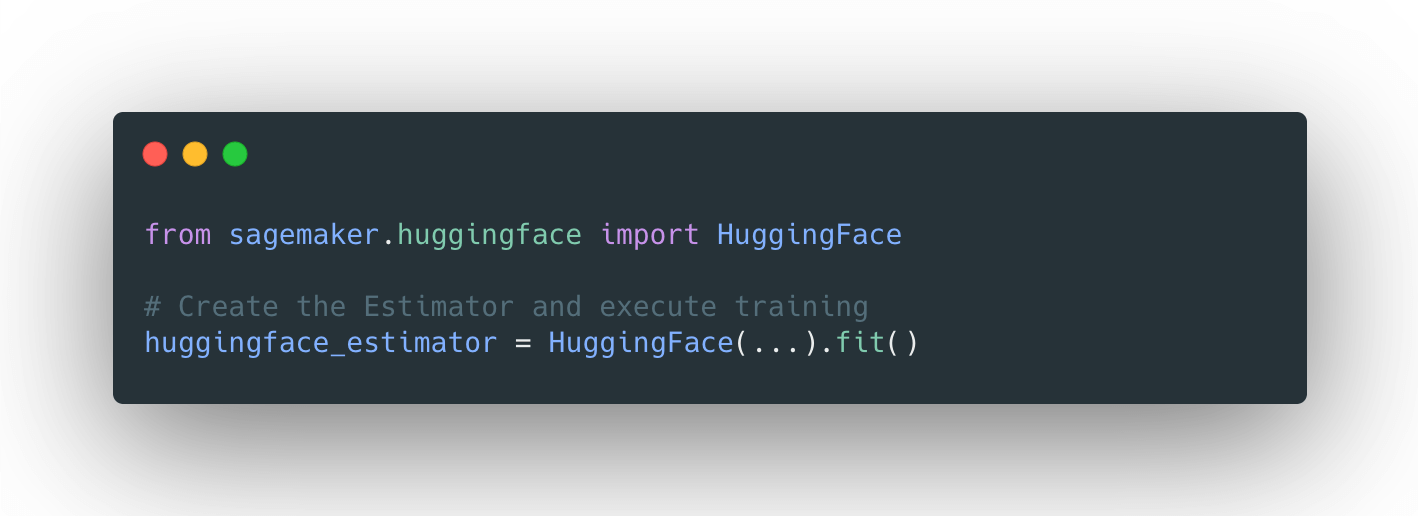

Training large summarization models like BART or T5 usually means distributed training, because a single GPU is often too slow or too small for the job. Relying on SageMaker for this isn't just convenience; it manages the distributed complexity that often derails projects. The real headache isn't the training code; it's coordinating GPUs across multiple instances and partitioning the data among them.
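To make the data partitioning point concrete, here is a minimal sketch of how a data-parallel job might split a dataset so that each GPU worker sees a disjoint slice. The function name `shard_indices` and the worker counts are hypothetical, chosen only for illustration; real frameworks handle this for you.

```python
from typing import List


def shard_indices(num_examples: int, world_size: int, rank: int) -> List[int]:
    """Return the example indices assigned to one worker.

    Uses a strided partition: worker `rank` takes every `world_size`-th
    example, starting at offset `rank`. Hypothetical helper, not a library API.
    """
    if world_size <= 0:
        raise ValueError("world_size must be positive")
    if not 0 <= rank < world_size:
        raise ValueError("rank must be in [0, world_size)")
    return list(range(rank, num_examples, world_size))


if __name__ == "__main__":
    # Illustrative assumption: 2 instances with 2 GPUs each, so 4 workers.
    world_size = 4
    for rank in range(world_size):
        print(rank, shard_indices(10, world_size, rank))
```

Note that the shards come out uneven when the dataset size is not a multiple of the worker count. PyTorch's `DistributedSampler`, for example, pads the index list by default so every worker runs the same number of steps.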

If you don't set up the SageMaker pipeline correctly, you end up with a mess of poorly synchronized training jobs. It's an MLOps problem disguised as a training problem. The benefit is getting the heavy lifting off your local machine and onto managed infrastructure, which saves headaches later during deployment and scaling.
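As a rough sketch of what "setting up the pipeline" can look like, the snippet below assembles the arguments for the SageMaker Python SDK's `HuggingFace` estimator with SageMaker's data-parallel library enabled. Everything specific here is an assumption for illustration: the `./scripts` directory, the instance type and count, the model and dataset names, and the framework versions, which must match a Hugging Face container actually available in your region. It also assumes the `run_summarization.py` example script from the Transformers repository is the entry point.

```python
def build_estimator_kwargs(role_arn: str, instance_count: int = 2) -> dict:
    """Collect estimator settings in one place so every job uses the same setup."""
    return {
        "entry_point": "run_summarization.py",  # assumed: Transformers example script
        "source_dir": "./scripts",               # hypothetical local directory
        "instance_type": "ml.p3.16xlarge",       # assumed multi-GPU instance type
        "instance_count": instance_count,
        "role": role_arn,
        # Assumed versions; check which container versions are available.
        "transformers_version": "4.26",
        "pytorch_version": "1.13",
        "py_version": "py39",
        "hyperparameters": {
            "model_name_or_path": "facebook/bart-large-cnn",  # illustrative model
            "dataset_name": "samsum",                         # illustrative dataset
            "do_train": True,
            "per_device_train_batch_size": 4,
            "output_dir": "/opt/ml/model",  # SageMaker uploads this directory to S3
        },
        # Turns on SageMaker's distributed data-parallel library.
        "distribution": {"smdistributed": {"dataparallel": {"enabled": True}}},
    }


def launch(role_arn: str) -> None:
    """Start the training job. Needs the `sagemaker` package and AWS credentials."""
    from sagemaker.huggingface import HuggingFace  # imported lazily on purpose

    estimator = HuggingFace(**build_estimator_kwargs(role_arn))
    estimator.fit()  # dataset is pulled by name inside the script in this sketch
```

Keeping the settings in a plain function like this is one way to standardize jobs: the configuration can be reviewed and unit-tested without touching AWS, and `launch` is the only part that needs credentials.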

What To Do

Standardize your distributed training pipelines using Amazon SageMaker to ensure reliable BART/T5 summarization model training.

Impact: high
