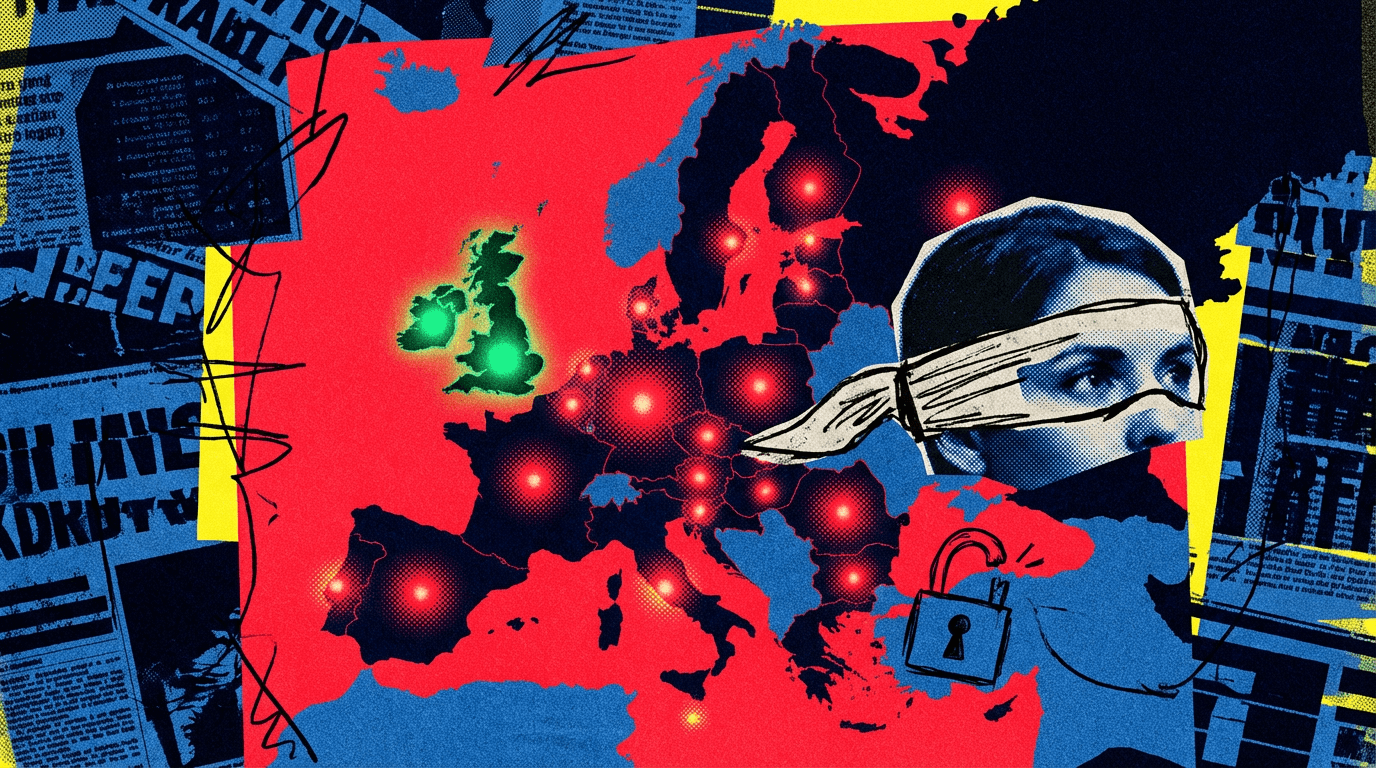

Claude Mythos is a wake-up call for Europe's AI safety apparatus

What Happened

Anthropic is restricting access to Claude Mythos, an AI model it says can find security vulnerabilities better than most humans. European authorities have almost no visibility into the system, while the UK is already running its own tests. The situation exposes a deeper structural problem.

Our Take

Anthropic built Claude Mythos, which it describes as outperforming most human security researchers at finding vulnerabilities, and is restricting access to it. European regulators have almost no visibility into the model, while the UK AI Safety Institute is already running independent evaluations.

Security teams using AI for vulnerability research face a capability gap that standard API access can't close. A team relying on Claude Sonnet or GPT-4 while Mythos sits restricted is measuring its offensive coverage against a weaker baseline than the strongest model available. For these teams, the EU-UK regulatory split matters less than the access asymmetry.

Dedicated red teams at security-focused organizations should apply for Anthropic's research access program now. Teams using Sonnet for standard code review are unaffected.

What To Do

Apply to Anthropic's restricted research program for Claude Mythos now instead of waiting for broad availability. With the UK safety institute's evaluations already under way and European authorities still lacking visibility, public access is unlikely to arrive for months.
