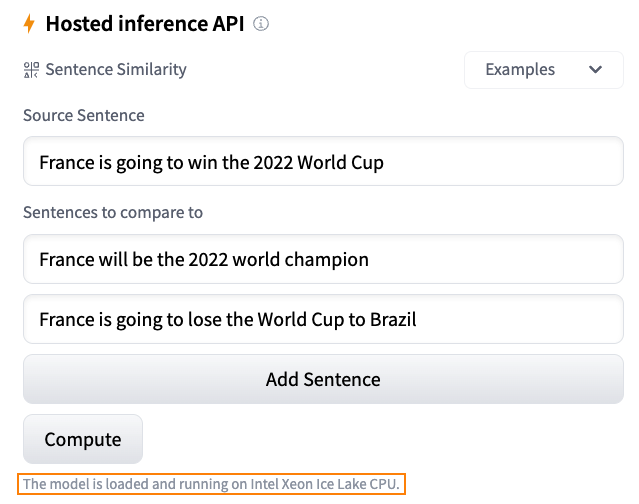

An overview of inference solutions on Hugging Face

Fordel's Take

Hugging Face now formalizes three inference paths: the Serverless Inference API (shared, rate-limited), Dedicated Endpoints (isolated GPU instances, no cold starts), and self-hosted TGI (Text Generation Inference). Each targets a different stage: prototyping, production, and scale, respectively.

Teams running RAG pipelines on the Serverless API in production are trading latency predictability to avoid paying roughly $1.30/hr for a Dedicated Endpoint. That math inverts fast at modest traffic. TGI's continuous batching delivers about 4x the throughput of naive HF Transformers serving on a single A10G, yet most teams never benchmark this before picking a provider.
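To make the "math inverts" point concrete, here is a rough sketch of how the fixed hourly price amortizes per request as traffic grows. The $1.30/hr figure is the approximate one quoted above; the function name and the traffic levels are illustrative assumptions, and the sketch assumes the instance can actually sustain the stated request rate.

```python
# Approximate Dedicated Endpoint price quoted in the article (not an official rate card).
DEDICATED_USD_PER_HOUR = 1.30


def cost_per_1k_requests(requests_per_second: float,
                         usd_per_hour: float = DEDICATED_USD_PER_HOUR) -> float:
    """Amortized cost of 1,000 requests on an always-on instance.

    Hypothetical helper: assumes the instance is billed for the full hour
    and can sustain `requests_per_second` without degrading.
    """
    if requests_per_second <= 0:
        raise ValueError("requests_per_second must be positive")
    requests_per_hour = requests_per_second * 3600
    return usd_per_hour / requests_per_hour * 1000


# Illustrative traffic levels, chosen only to show the trend.
for rps in (0.1, 1, 10):
    print(f"{rps:>5} req/s -> ${cost_per_1k_requests(rps):.4f} per 1k requests")
```

At a steady 1 request per second the hourly fee works out to about $0.36 per thousand requests, and it falls linearly as traffic rises. Comparing that against what unpredictable latency costs a given product is left to the reader's own numbers.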

Anyone serving embeddings or rerankers in a retrieval pipeline should migrate off Serverless immediately. Pure OpenAI API shops can ignore this entirely.

What To Do

For production RAG, deploy TGI on a Dedicated Endpoint instead of the Serverless Inference API: continuous batching cuts per-query latency variance under sustained load.
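Before migrating, it may be worth measuring the latency variance in question. The following is a minimal sketch, not a full load test: `latency_profile` and `send_query` are hypothetical names, the calls run sequentially rather than concurrently, and the `time.sleep` stand-in should be replaced with a real request to whichever endpoint is being evaluated.

```python
import statistics
import time
from typing import Callable, Dict


def latency_profile(send_query: Callable[[], object], n: int = 50) -> Dict[str, float]:
    """Time `n` sequential calls and summarize their latency in seconds.

    `send_query` is a placeholder for whatever issues one request to the
    endpoint under test. Needs n >= 2 for the spread statistics.
    """
    samples = []
    for _ in range(n):
        start = time.perf_counter()
        send_query()
        samples.append(time.perf_counter() - start)
    cuts = statistics.quantiles(samples, n=100)  # 99 percentile cut points
    return {
        "p50": statistics.median(samples),
        "p95": cuts[94],  # 95th percentile
        "stdev": statistics.stdev(samples),
    }


# Stand-in workload so the sketch runs offline; swap in a real request.
profile = latency_profile(lambda: time.sleep(0.001), n=20)
print({name: round(value, 5) for name, value in profile.items()})
```

Running the same harness against both a Serverless and a Dedicated deployment, and comparing the gap between p50 and p95, gives a first rough read on predictability. A proper benchmark would also drive concurrent requests to exercise batching.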
