Accelerating Stable Diffusion Inference on Intel CPUs

Fordel's Take

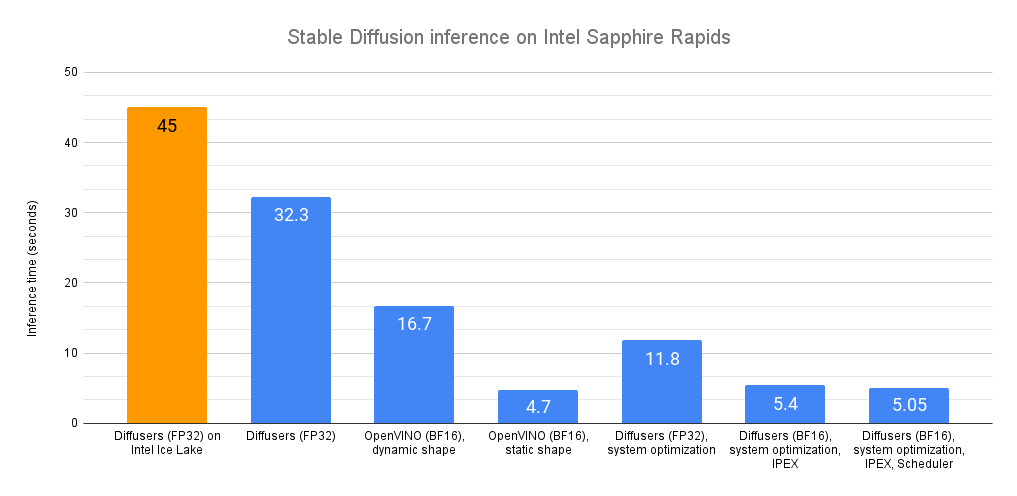

Accelerating Stable Diffusion inference on Intel CPUs? It's a classic free hack that shifts the work from a dedicated GPU onto general-purpose hardware. It works, and it's free, which is the only selling point here. You're squeezing performance out of machines you already have, which suits small deployments.

Don't expect massive gains. It's fine for quick proofs of concept or low-volume internal use. Don't build production systems on it; the latency trade-offs usually kill you in a real workflow.

What To Do

Test CPU inference speeds against baseline GPU performance for your specific use case.
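
One way to run that comparison is a small timing harness. The sketch below is an assumption, not a prescribed method: `benchmark` and `slowdown` are hypothetical helper names, and the harness times any zero-argument callable, so it makes no claims about a particular model or library.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(generate: Callable[[], object], warmup: int = 1, runs: int = 5) -> Dict[str, float]:
    """Time a zero-argument callable and return latency statistics in seconds."""
    # Warmup calls absorb one-time costs (lazy initialization, caches) and are not timed.
    for _ in range(warmup):
        generate()

    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        generate()
        latencies.append(time.perf_counter() - start)

    return {
        "mean_s": statistics.mean(latencies),
        "median_s": statistics.median(latencies),
        "max_s": max(latencies),
    }


def slowdown(cpu_stats: Dict[str, float], gpu_stats: Dict[str, float]) -> float:
    """Return how many times slower the CPU median latency is than the GPU median."""
    return cpu_stats["median_s"] / gpu_stats["median_s"]


# Hypothetical usage with Hugging Face diffusers (not executed here; the model
# ID, prompt, and step count are placeholders to replace with your own workload):
#
#   from diffusers import StableDiffusionPipeline
#   pipe = StableDiffusionPipeline.from_pretrained("<your-model-id>")
#   cpu_stats = benchmark(lambda: pipe.to("cpu")("a test prompt", num_inference_steps=25))
#   gpu_stats = benchmark(lambda: pipe.to("cuda")("a test prompt", num_inference_steps=25))
#   print(f"CPU is {slowdown(cpu_stats, gpu_stats):.1f}x slower than GPU")
```

Use the same prompt, step count, and image size on both devices, and compare medians rather than single runs, since the first call often includes one-time setup cost.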
