Accelerating PyTorch Transformers with Intel Sapphire Rapids - part 1

Fordel's Take

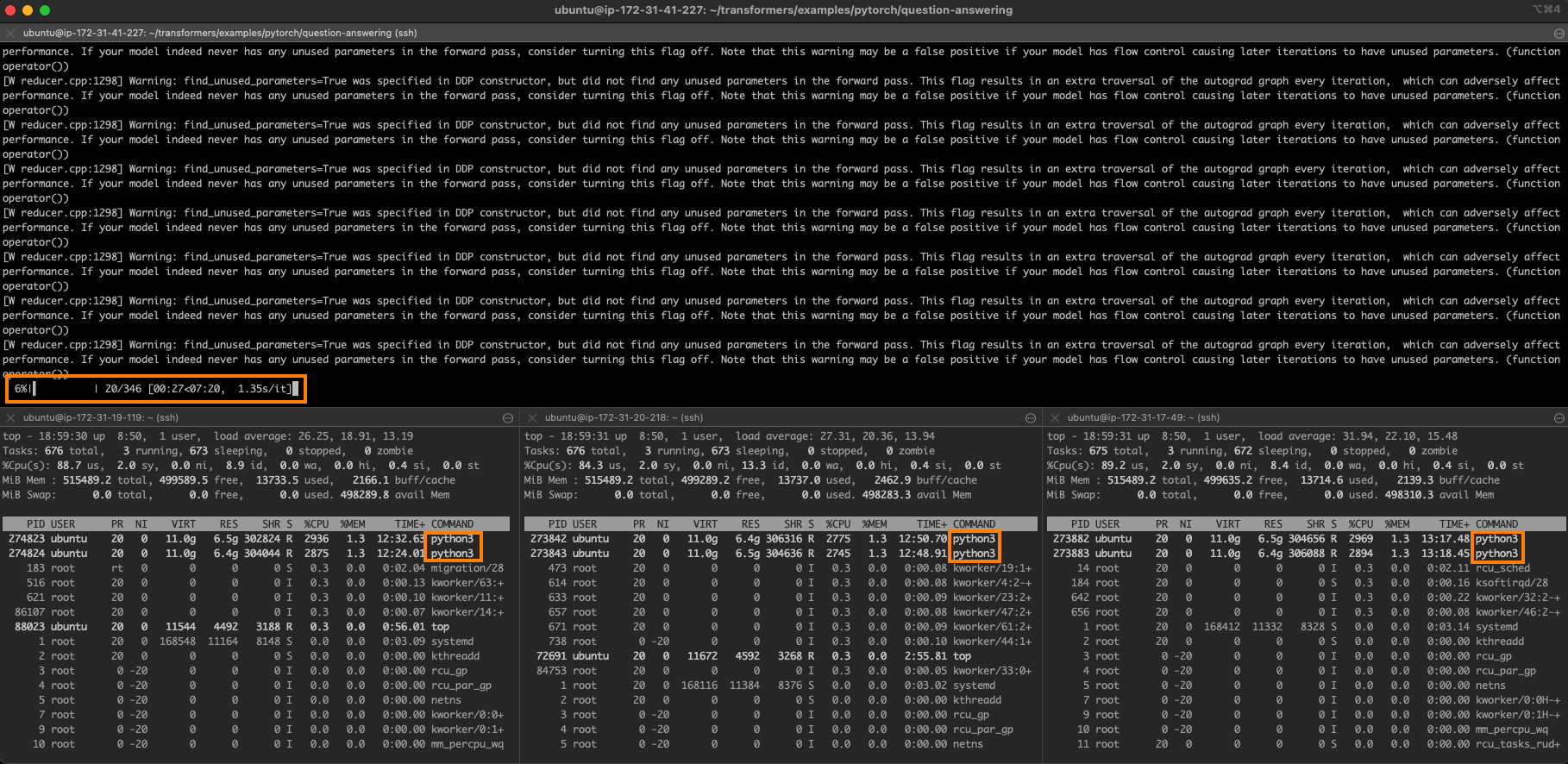

This is pure hardware-engineering noise wrapped in some academic PR. Intel's claim that Sapphire Rapids accelerates transformers is technically fine, but the real question is what sustained throughput and memory bandwidth look like when you're running massive models on actual game assets. I don't care about the spec sheet; I care about latency in our specific deployment pipeline.

If you're just squeezing a few extra percent out of an existing PyTorch setup, it's fine. If you're building a custom accelerator stack, then we need real benchmarks, not press releases.

What To Do

Benchmark your specific workloads in your actual deployment environment before adopting the architecture.
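
As a starting point, here is a minimal sketch of a latency harness that reports p50/p99 latency and calls per second for any callable. The names `benchmark` and `placeholder_workload` are hypothetical, and the placeholder only burns CPU; it assumes you swap in one real inference call on one real input from your own pipeline.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(workload: Callable[[], object], warmup: int = 10, iters: int = 100) -> Dict[str, float]:
    """Time repeated calls to `workload` and summarize per-call latency."""
    if iters < 2:
        raise ValueError("iters must be at least 2 to compute percentiles")

    # Warm-up calls are discarded so one-time costs (cold caches, lazy
    # initialization) do not skew the measured runs.
    for _ in range(warmup):
        workload()

    latencies_ms = []
    for _ in range(iters):
        start = time.perf_counter()
        workload()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 98 is p99.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    total_s = sum(latencies_ms) / 1000.0
    return {
        "p50_ms": cuts[49],
        "p99_ms": cuts[98],
        "mean_ms": statistics.fmean(latencies_ms),
        "calls_per_s": iters / total_s if total_s > 0 else float("inf"),
    }


def placeholder_workload() -> int:
    # Hypothetical stand-in. Replace with one real inference call, for example
    # a PyTorch forward pass on a representative input (an assumption about
    # your setup, not something taken from the original article).
    return sum(i * i for i in range(10_000))


if __name__ == "__main__":
    for name, value in benchmark(placeholder_workload).items():
        print(f"{name}: {value:.3f}")
```

Run it on the current hardware and on the candidate hardware with the same inputs and batch sizes, and compare the p99 numbers rather than the mean, since tail latency is usually what a deployment pipeline feels.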
