Accelerating Document AI

What Happened

Fordel's Take

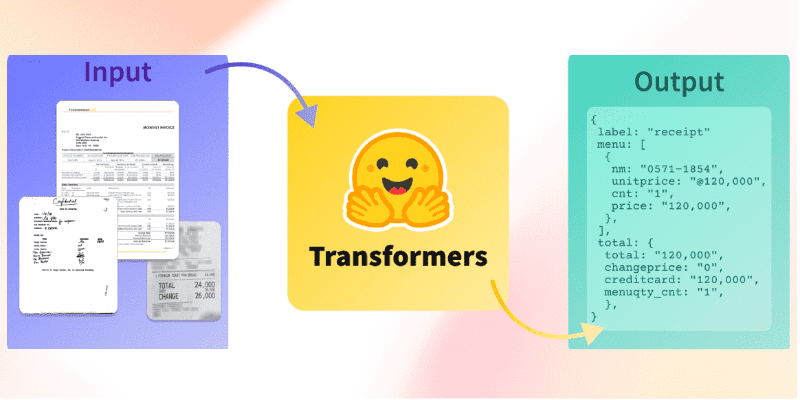

Multimodal LLMs now ingest PDFs and images directly — no OCR step required. Claude Sonnet and Gemini 1.5 Pro handle tables, mixed layouts, and scanned documents end-to-end in a single API call.
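As a rough illustration of what "a single API call" can look like, here is a minimal sketch that assumes Anthropic's Messages API and its base64 `document` content block. The helper name `build_pdf_request` and the `YOUR_MODEL_ID` placeholder are illustrative and not from the article. The sketch only builds the request body; it does not send it.

```python
import base64
import json


def build_pdf_request(pdf_bytes: bytes, prompt: str, model: str, max_tokens: int = 1024) -> dict:
    """Build a Messages API request body that carries a PDF as a document block."""
    # The PDF travels inline as base64, so no OCR output is attached.
    encoded = base64.standard_b64encode(pdf_bytes).decode("ascii")
    return {
        "model": model,  # assumption: caller supplies a PDF-capable model ID
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "document",
                        "source": {
                            "type": "base64",
                            "media_type": "application/pdf",
                            "data": encoded,
                        },
                    },
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


# Placeholder bytes for illustration; in practice, read the PDF from disk.
body = build_pdf_request(b"%PDF-1.4 placeholder", "Extract the invoice total.", model="YOUR_MODEL_ID")
print(json.dumps(body)[:120])
```

The document and the extraction prompt go out in one message, which is the property the paragraph above relies on: there is no intermediate OCR artifact to store, chunk, or reconcile.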

Most RAG pipelines built in 2023 still route documents through Textract or Tesseract before chunking. With Textract, that adds $1.50 per 1,000 pages plus pipeline latency; Tesseract is free to run but still adds a preprocessing stage. Treating document parsing as a preprocessing problem is now the wrong frame: the model is the parser.
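To put the per-page figure in perspective, here is a small sketch of the arithmetic. The rate is the one quoted above; the monthly volumes are hypothetical, and the model's own token charges are not included.

```python
TEXTRACT_USD_PER_1000_PAGES = 1.50  # rate quoted in the text above


def ocr_cost_usd(pages: int, usd_per_1000_pages: float = TEXTRACT_USD_PER_1000_PAGES) -> float:
    """Return the OCR line item for a page count at a flat per-page rate."""
    if pages < 0:
        raise ValueError("pages must be non-negative")
    return pages * usd_per_1000_pages / 1000


# Hypothetical monthly volumes, chosen only for illustration.
for monthly_pages in (10_000, 250_000, 1_000_000):
    print(f"{monthly_pages:>9,} pages -> ${ocr_cost_usd(monthly_pages):,.2f}")
```

At the quoted rate, a million pages a month is $1,500 of OCR spend before any model call is made.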

Teams running invoice or contract extraction should cut Textract from the stack entirely. Plain-text PDFs (those with an embedded text layer) still work fine with pdfplumber.
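One way to act on that split is a small router: try text extraction first and fall back to the multimodal model when pages come back nearly empty. The sketch below is a heuristic under stated assumptions. The thresholds and the names `has_text_layer` and `choose_parser` are hypothetical; only `pdfplumber.open`, `pdf.pages`, and `page.extract_text()` are real pdfplumber calls.

```python
from typing import List, Optional


def has_text_layer(
    page_texts: List[Optional[str]],
    min_chars_per_page: int = 50,        # assumed threshold, tune per corpus
    min_text_page_ratio: float = 0.8,    # assumed threshold, tune per corpus
) -> bool:
    """Heuristic: a PDF is text-based if enough pages yield enough characters."""
    if not page_texts:
        return False
    text_pages = sum(
        1 for text in page_texts
        if text and len(text.strip()) >= min_chars_per_page
    )
    return text_pages / len(page_texts) >= min_text_page_ratio


def choose_parser(page_texts: List[Optional[str]]) -> str:
    """Route text PDFs to pdfplumber and everything else to the multimodal model."""
    return "pdfplumber" if has_text_layer(page_texts) else "multimodal"


def extract_page_texts(path: str) -> List[Optional[str]]:
    """Extract per-page text with pdfplumber (imported lazily; third-party)."""
    import pdfplumber

    with pdfplumber.open(path) as pdf:
        return [page.extract_text() for page in pdf.pages]


# Scanned pages typically yield no extractable text, so they route to the model.
print(choose_parser([None, "", "   "]))
print(choose_parser(["Invoice 1042 " * 20, "Line items " * 30]))
```

Routing on extracted character counts keeps the cheap path cheap: text PDFs never reach the model for parsing, and scans skip OCR and go straight to it.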

What To Do

Drop Textract from pipelines that already use Claude or Gemini: native multimodal ingestion eliminates the per-page OCR charge and the preprocessing latency.
