Two engineers on the same team. Both use Claude Code and Cursor every day. The first closes more tickets than anyone else on the team. Their PRs are clean, their tests pass, their code is easy to review. The second also closes tickets faster than before. Their code also looks clean. Their tests also pass. In production, their features quietly accumulate edge cases nobody tested, integrations that break under load, and security assumptions that turn out to be wrong.

AI tools raised both engineers' output volume. They did not raise both engineers' output quality.

Why the Problem Is Invisible

The manager sees closed tickets and merged PRs. Both engineers are closing more tickets and merging more PRs than they were six months ago. The manager's productivity metrics are improving across the board. What the manager cannot see without reading the code: the first engineer is using AI to produce better code faster. The second is using AI to produce plausible-looking code faster, where "plausible-looking" means it passes review and tests without actually addressing the edge cases that matter.

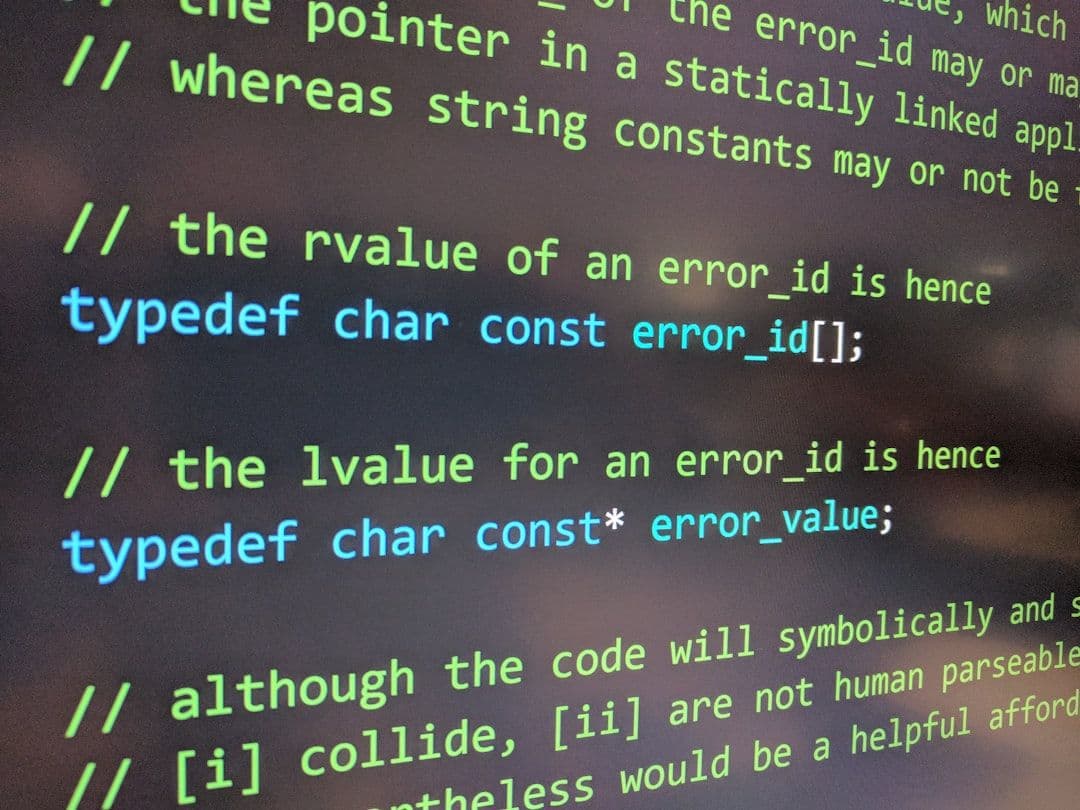

AI tools are exceptionally good at producing code that looks correct. They have been trained on millions of examples of code that was correct enough to be committed. The code they produce is syntactically clean, stylistically consistent, and passes tests that are scoped the same way the prompt was scoped. What AI cannot do is know what you did not ask it to consider. The engineer who does not think about edge cases will not ask about edge cases. The AI will not volunteer them.
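A small sketch of what "tests scoped the same way the prompt was scoped" can look like in practice. The function names and the bill-splitting scenario are hypothetical, chosen only for illustration. Suppose the prompt was "split a bill evenly between people": the first version and its one test answer exactly that and nothing more.

```python
def split_bill_naive(total_cents: int, people: int) -> list[int]:
    """Hypothetical happy-path version: answers the prompt as asked."""
    share = total_cents // people
    return [share] * people


def split_bill(total_cents: int, people: int) -> list[int]:
    """Hypothetical version after someone asked about the edge cases."""
    if people <= 0:
        raise ValueError("people must be a positive integer")
    if total_cents < 0:
        raise ValueError("total_cents must not be negative")
    share, remainder = divmod(total_cents, people)
    # Give one leftover cent to each of the first `remainder` people
    # so the shares always sum back to the total.
    return [share + 1 if i < remainder else share for i in range(people)]


# The test that mirrors the prompt passes for both versions.
assert split_bill_naive(1000, 4) == [250, 250, 250, 250]
assert split_bill(1000, 4) == [250, 250, 250, 250]

# The case nobody asked about: the naive version silently loses a cent.
assert sum(split_bill_naive(1000, 3)) == 999
assert sum(split_bill(1000, 3)) == 1000
```

Both versions look clean and both pass the obvious test. The difference only shows up with inputs the prompt never mentioned, such as a total that does not divide evenly or a party of zero, where the naive version fails with an unhandled `ZeroDivisionError`.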

“AI tools expose the quality of the engineer's thinking, not just their typing speed. Fast, shallow thinking produces fast, shallow code. The speed just makes the shallow thinking more visible in production sooner.”

The Amplification Effect

The gap between a great engineer and a mediocre one has widened with AI tools, not narrowed. The great engineer uses AI to handle the implementation details of well-scoped, thoughtfully designed solutions. They spend their thinking time on the questions the AI cannot answer for them. Which edge cases need handling? What security assumptions are embedded in this design? What happens when this fails at scale? The AI handles the code. The engineer handles the judgment.

The mediocre engineer uses AI to handle everything: the design, the implementation, the test cases, the edge case identification. The AI does all of this for the happy path. For the happy path, the output is indistinguishable from what the great engineer produces. For everything else (the error paths, the scale failures, the security edge cases, the integration failures), the mediocre engineer's output is just as mediocre as it was before, only delivered faster.

What Changes for Engineering Organisations

The teams that will be hurt most by AI tools are the ones that use ticket velocity as their primary engineering metric. They will see higher velocity across the board and conclude that their team has improved. They will see production incidents increase six months later and not understand why, because the incidents are the cost of judgment debt that accumulated invisibly while velocity was rising.

The teams that benefit most are the ones with strong code review cultures, clear ownership, and managers who understand the code well enough to evaluate quality rather than just quantity. For these teams, AI tools do exactly what they advertise: they reduce the implementation tax on good engineering judgment, letting that judgment do more than it could before. The judgment is the product. The AI is the lever. How useful a lever is depends on what is pushing it.